library(tidyverse)

library(HistData)

library(readxl)

library(unvotes)

library(janitor)

dta_dop_affordable_housing<-read_csv("./Data/Affordable_Housing_by_Town.csv")

dta_playfair<-Wheat

tmp_dta_titanic_passengers_bio<-read_csv("./Data/titanic bio data.csv")

tmp_dta_titanic_passengers_ticket<-read_excel("./Data/titanic ticket data.xlsx")

dta_titanic_passengers<-left_join(tmp_dta_titanic_passengers_ticket,tmp_dta_titanic_passengers_bio)

#from: https://ilostat.ilo.org/methods/concepts-and-definitions/classification-occupation/

dta_isco08_definitions<-read_excel("./Data/ISCO-08 EN Structure and definitions.xlsx") |>

clean_names()

#General Social Survey (GSS) data from NORC, the University of Chicago (gss_cat from the forcats package)

dta_norc_gss<-read_csv("./Data/NORC - General Social Survey (GSS).csv")

dta_un_roll_calls<-un_roll_calls

dta_un_roll_call_issues<- un_roll_call_issues

dta_un_votes<- un_votesBackground

About this guide

This guide is designed to provide an accessible introduction to the R programming language for policy analysis. While it is designed to complement the course An Introduction to R for Policy Analysis, it is meant as a standalone guide for newcomers to the R programming language.

Reflecting the realities of real-world policy analysis, and that the best way to learn R is by doing R, this book is designed to be a practice manual for using R. The topics have therefore been introduced using real-world data sets and just enough theory to get you started working with data.

Unlike many technical manuals, this guide acknowledges the messiness of real-world data and the iterative nature of policy analysis. Rather than presenting idealized “best practice principles” in isolation, we focus on equipping you with practical strategies for tackling the common challenge faced in policy analysts and research: fielding ambiguous questions with limited access to data under tight deadlines.

Throughout this guide, you’ll find practical examples drawn from policy-relevant domains such as economics, public health, education, and environmental analysis. With each chapter introducing slightly more sophisticated techniques for working with data and answering policy questions.

The guide begins by providing an introduction to the R language and how it relates to applied policy analysis. We then move to learning how to import, clean and explore data before moving to producing visualizations, statistical modelling and tools for automating your workflow. This guide also covers a topic I wish I was introduced to as a newcomer, such as building a legible and reproducible analysis workflow, troubleshooting errors and deciding whether you need to use R for an analysis task.

This book is a work in progress and will be updated over time, but comments, corrections and suggestions are welcome.

How AI was used: The first draft of this guide was written by me (a human) based on the course An introduction to R for Policy Analysis. AI tools were used to help better communicate ideas and organize content, but the final guide (and any mistakes) are my own.

Focus Datasets

A selection of datasets will be used to illustrate the concepts covered in this book. Where possible, datasets have been selected that are both policy relevant and in a format similar to what might be found in real-world policy analysis. In other cases, datasets have been selected because they’re interesting (or fun) to work with.

Titanic passenger survival data sourced from Frank Harrell Jr’s “R Workflow for Reproducible Data Analysis and Reporting” (link).

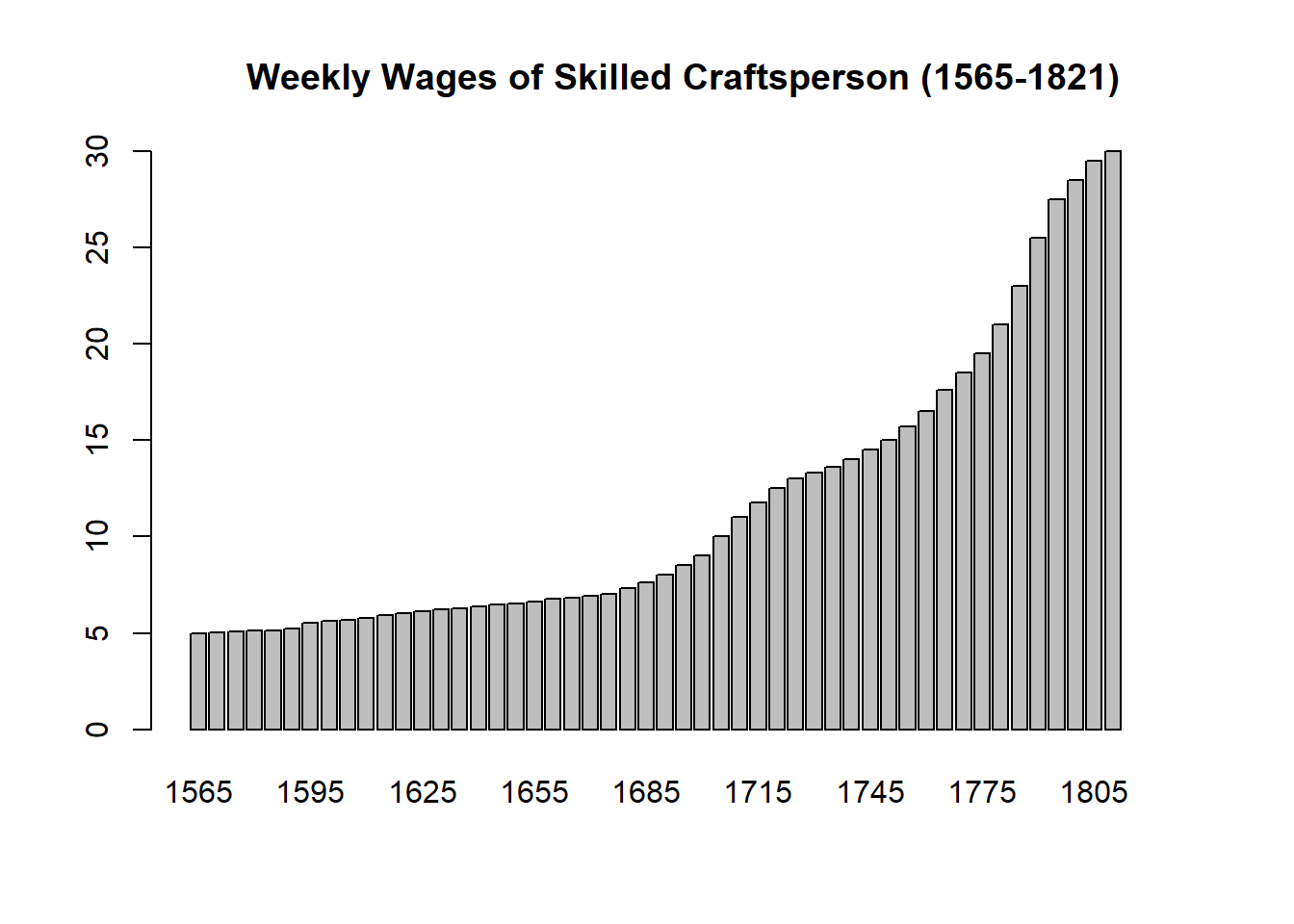

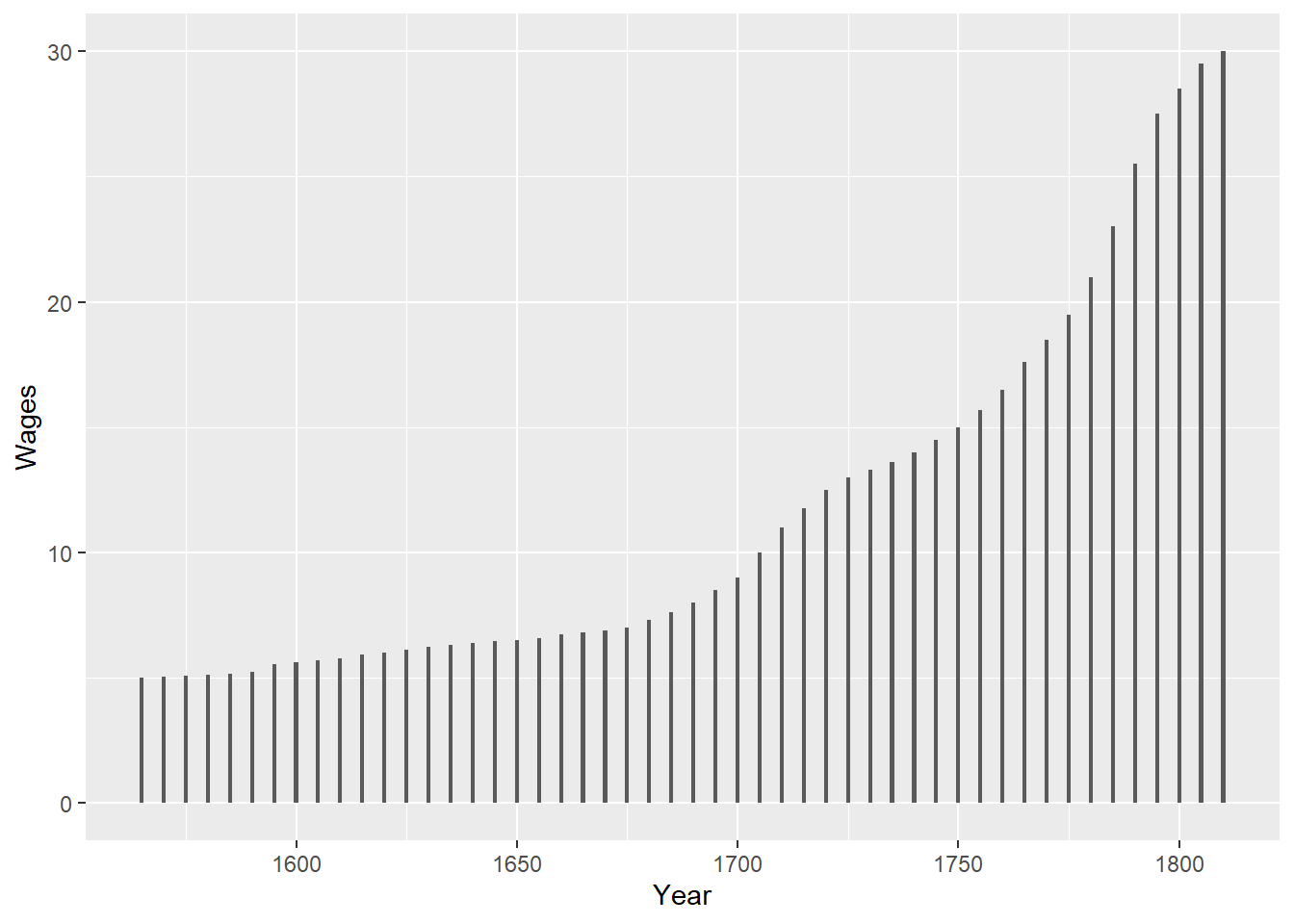

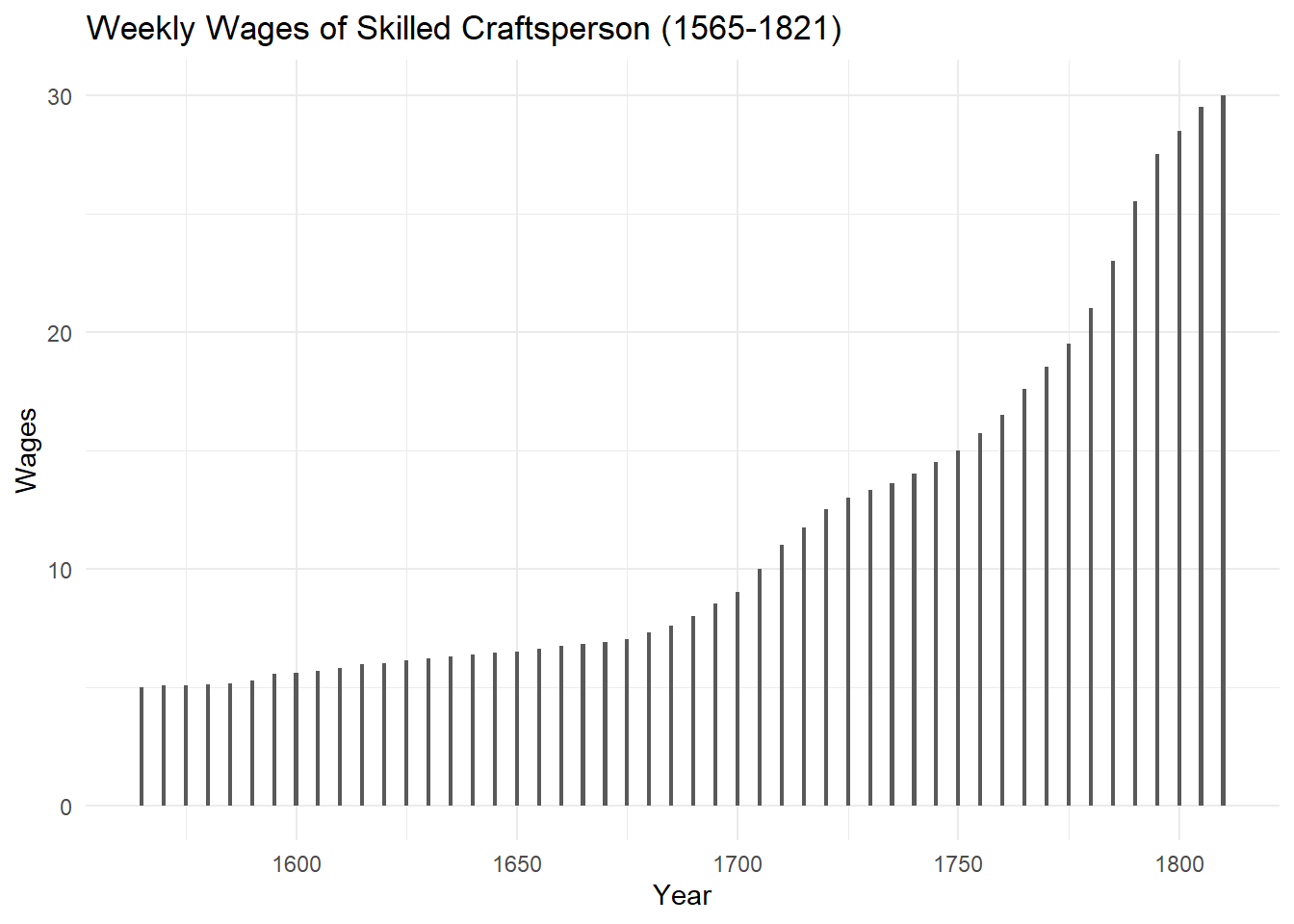

Playfair’s data on wages and the price of wheat sourced from the HistData package.

Affordable housing by town 2011-2023, published by the Department of Planning, Connecticut, United States (link).

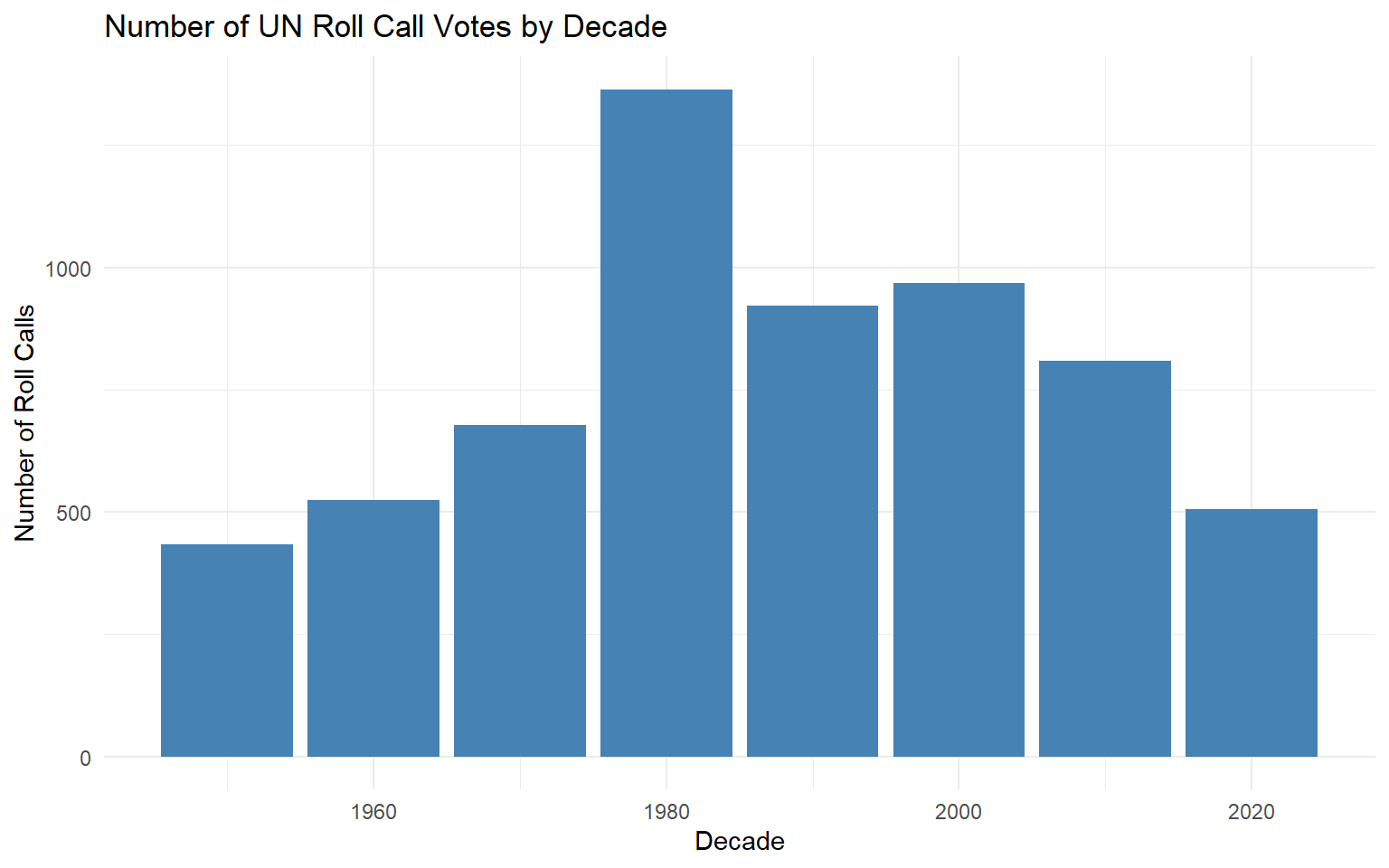

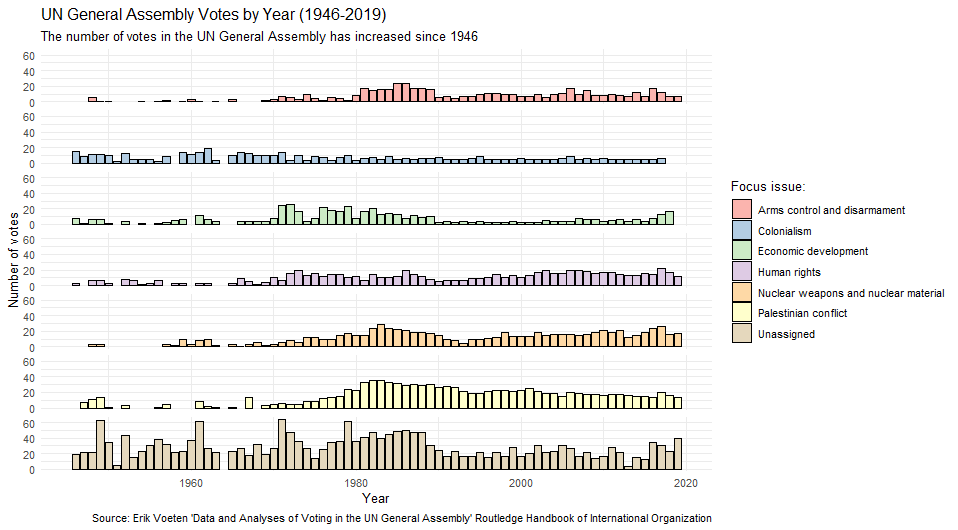

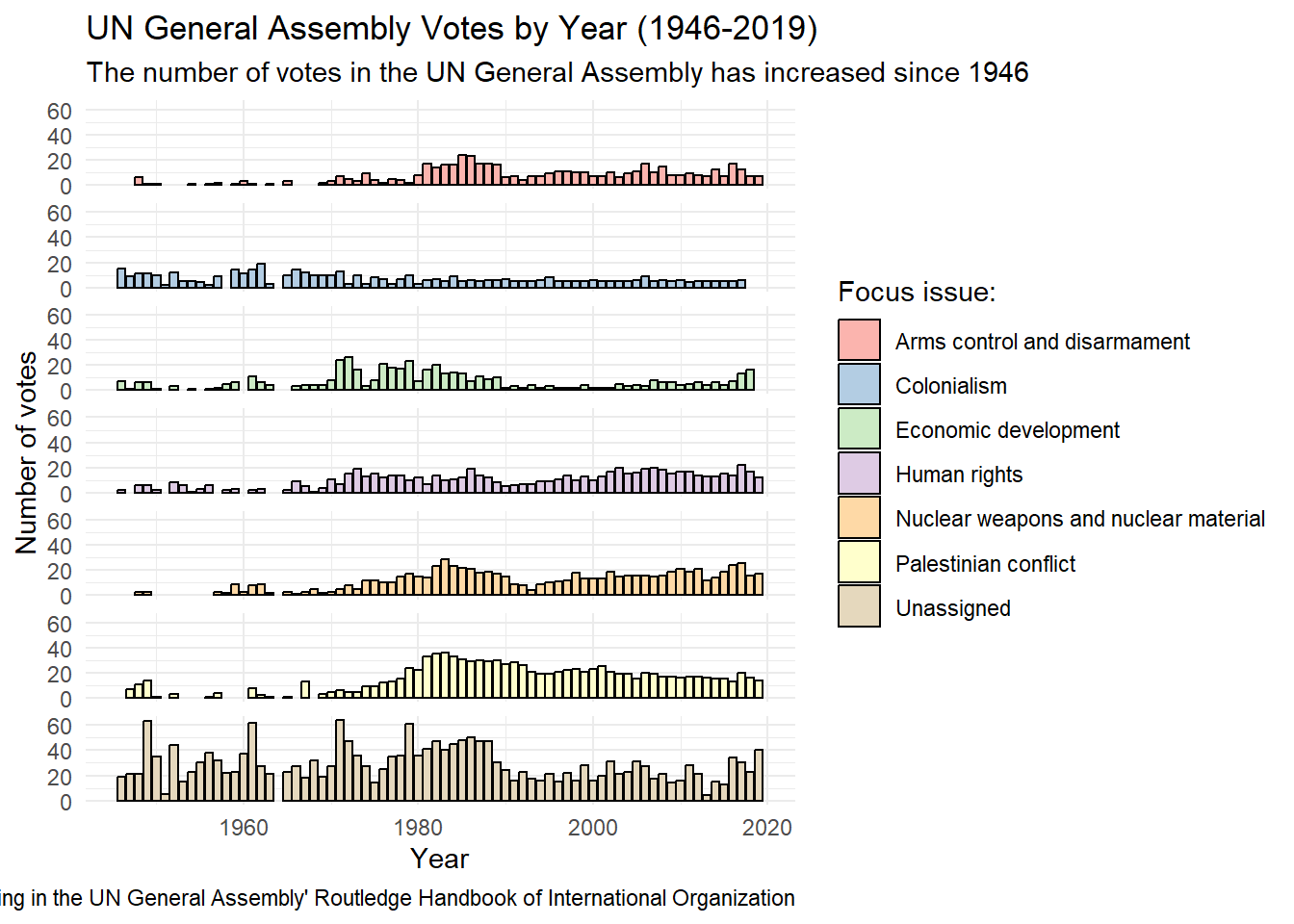

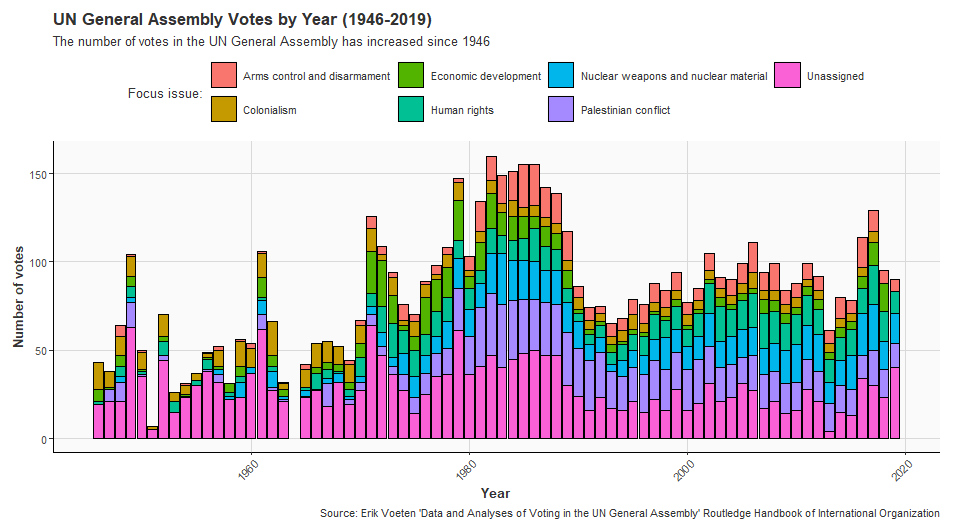

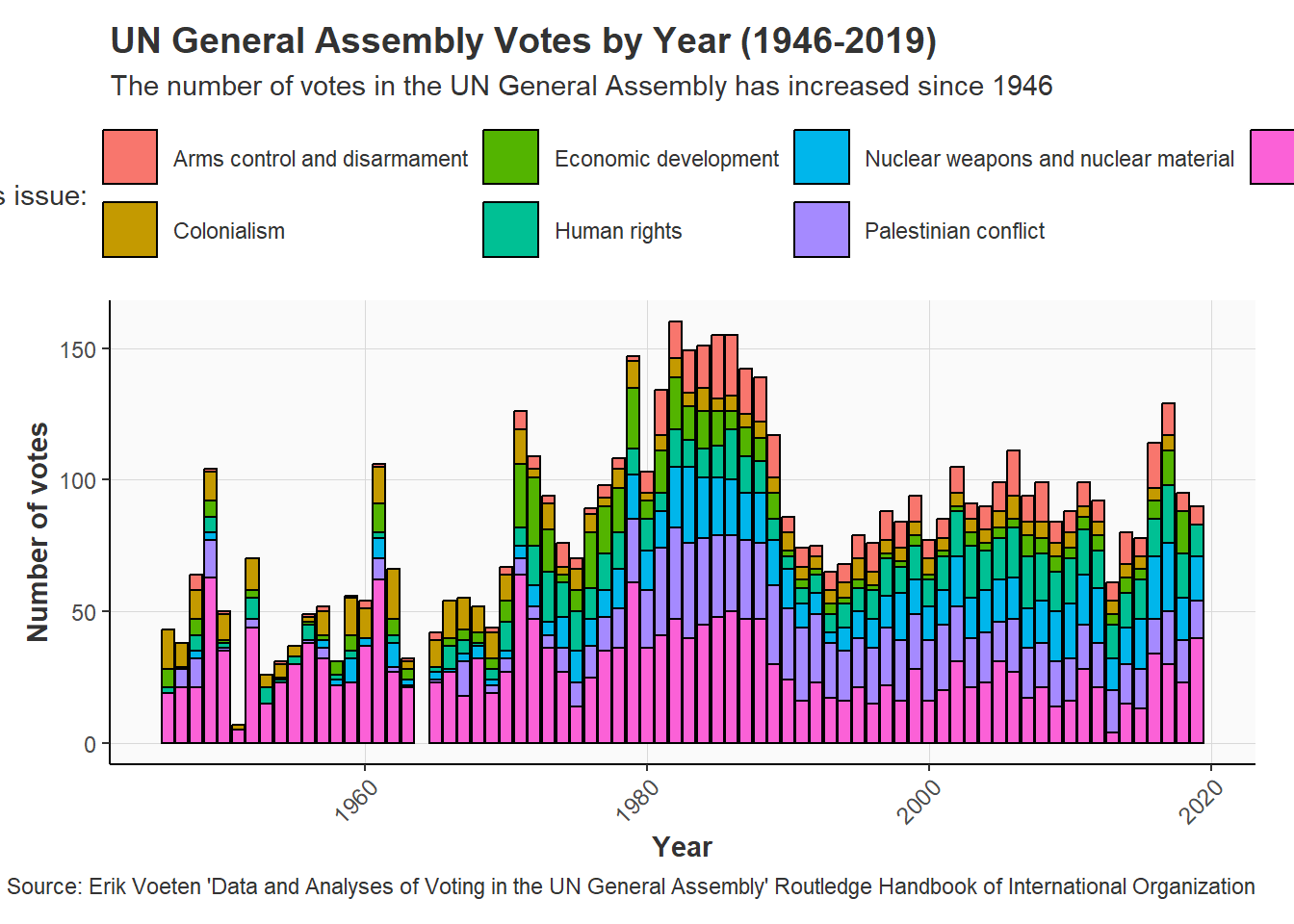

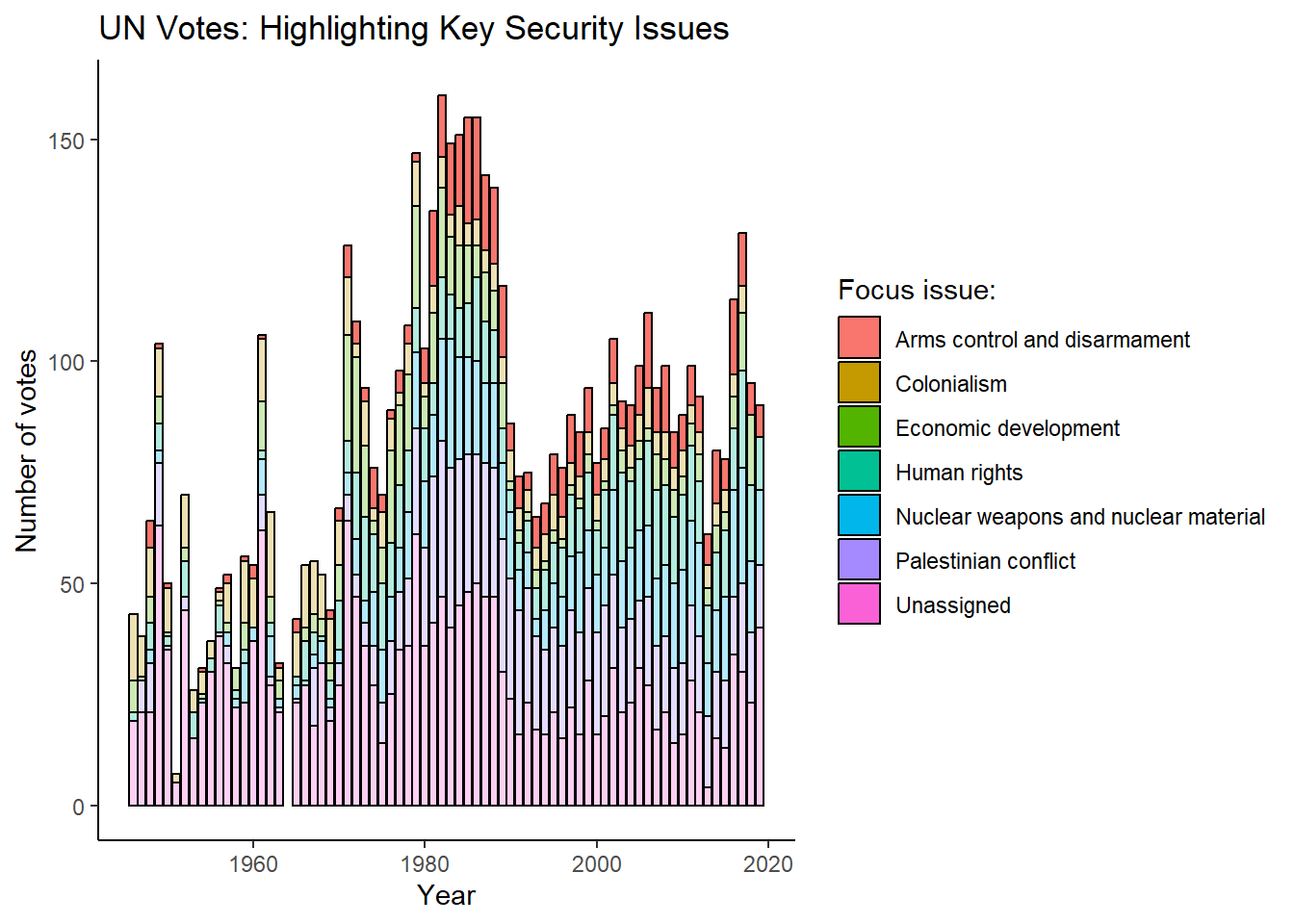

United Nations General Assembly voting data from the unvotes package based on Erik Voeten “Data and Analyses of Voting in the UN General Assembly” Routledge Handbook of International Organization, edited by Bob Reinalda (published May 27, 2013)

Additional Resources

Swirl: Learning R in R

Another great resource for learning R is the “swirl” package. which provides a set of interactive lessons that introduce the basics of base R within the R console.

Installing swirl: You can install and run the swirl package by following these steps:

Install swirl: Execute the command

install.packages("swirl")in the R consoleLoad the swirl package:

library(swirl)Run Swirl:

swirl()Select a course, and lesson to complete: once you’ve run the swirl package it will guide you through the process.

Note: swirl lessons can be really helpful for learning base R, but some of the lessons are no longer actively maintained. You can find out more about the swirl package here and errors that might arise via the github repository.

Programming for Public Policy Analysis

Learning Public Policy Analysis

Applied policy analysis operates under real-world constraints—limited budgets, tight deadlines, and imperfect information. Successful policy analysis is therefore the art of the possible as we do our best to balance analytical rigor with the data, resources and time available to us:

“…Decision makers usually operate within a tight time frame with inadequate resources and information. They are buffeted by special-interest pleading, bureaucratic imperatives, and political forces whose vision extends no further than the next election cycle (Dye, 1984). In such an atmosphere, greater technical expertise can play a role, but a significantly constrained one, at best…”

Source: Barkenbus, J., 1998. Expertise and the policy cycle. Tennessee: Energy, Environment, and Resources Centre: University of Tennessee. Retrieved March, 21, p.2016.

As a practitioner, I’m not blind to this reality. I’m also familiar with the elegant theories and neat approaches presented in textbooks having little to do with the realities faced by public policy professionals.

The conceptual frameworks and models presented in this book are therefore not designed to reflect reality, but help us think about an idea or problem. In the words of George Box, “All models are wrong, but some are useful”. This book uses models and frameworks for their utility, not because they’re right.

A large part of learning public policy is about doing it

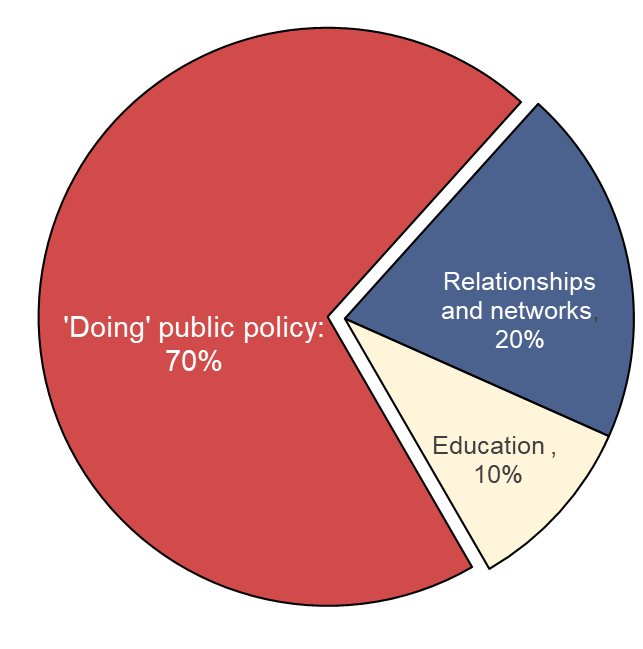

Based on: Ayres, R., Head, B., Mercer, T. and Wanna, J., 2021. Learning policy, doing policy: Interactions between public policy theory, practice and teaching (p. 352). ANU Press.

Although what this looks like in practice varies from job to job, my personal experience as an applied economist suggested this to be common. Yet, when writing this course I came across few resources from academia that provided an accurate (or useful) picture of what applied policy analysis looked like in practice. Most textbooks cover similar statistical techniques designed to answer narrow and well-defined questions using well-formatted datasets.

Policy analysis, it seemed, was a dark art that a person must be indoctrinated into and couldn’t be taught. In the words of one practitioner:

“…there is almost a wish to keep the policy process secret, the notion that it cannot be taught. This is something that you have to be anointed into; it is a different kind of knowledge”

Source: Mercer, T., 2021. What can policy theory offer busy practitioners? Investigating the Australian experience. LEARNING POLICY, DOING POLICY, p.51.

Although it’s not the intention of this book (or the associated course) to fill this gap, both have been designed with this in mind. With the focus questions, analysis techniques and datasets all being selected to better mirror the types of problems faced in real-world policy analysis.

The course is also structured around the application of simple principles and guiding questions that have been drawn from the experiences of real world practitioners from government, the private sector and academia.

Data and the Public Policy Cycle

- The ‘Policy Cycle’ describes how public policies and interventions typically move from conception to implementation. The table below shows each stage of this cycle and how data analysis can contribute strengthen policy-making at each stage:

| Policy Cycle Stage | Description | How Data Analysis Can Contribute |

|---|---|---|

| Emergence | Public policy issue(s) emerge | Identifying and quantifying the scope and scale of emerging public policy issue(s). |

| Agenda Setting | Identifying and prioritizing issues that warrant action by government | Using evidence to quantify issues, identify trends and prioritize potential public policy issues that require attention. |

| Policy Formulation | Developing possible solutions and specific plans | Modeling potential outcomes, comparing intervention options and estimating the cost of alternative policy interventions. |

| Implementation and Monitoring | Putting the policy into action and tracking progress | Using data to monitor progress, detect implementation challenges and/or monitoring uptake. |

| Evaluation | Assessing outcomes and determining if the policy achieved its goals | Evaluating what worked (or didn’t) once a policy has been fully implemented. |

From Spreadsheets to Scripts

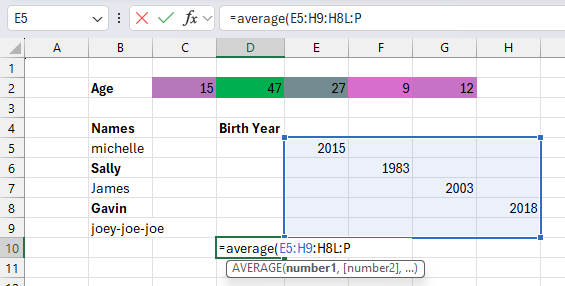

Most of us start working with data using a point-and-click tool like Microsoft Excel. Although point-and-click tools have their own learning curve, they are designed to work like most other consumer analysis software: you open some data, manually rearrange it to a format that makes sense and to conduct analysis by clicking on the tools presented to you.

Want to organize age, dates and names in a single column?

Go for it!

Interested in jazzing up your data by making it different shades of pink?

No problem!

Notice errors in your data?

Easy: Directly correct them and your boss will be none the wiser.

R is a stickler by comparison. While Excel lets you click, drag, and directly edit cells, R requires you to write commands for every action. R also expects your data is organized in a specific way, with columns of the right type and using the same boring color as every other variable. In R, you can’t just click a cell and change it, you need to make changes to your data using code, which can feel cumbersome for newcomers to programming.

When you’re first learning R, this can feel limiting, but each restriction serves a purpose:

Every action is visible: Your code shows exactly what you’re doing. Instead of hidden formulas and errors, code transparently documents each step you taken.

Consistency by design: Applying analysis to vectors, instead of cells, encourages analysis to be applied identically across a variable. This can make it easier to spot errors (as they will be repeated across the entire variable) and encourages us to development a consistent methodology.

Structure allows automation: Requiring that data is organized in a consistent way makes it easier to automate and streamline repetitive analysis, and

stealre-purpose old code for new projects.Reproducibility: Code creates a permanent record of each step in your analysis. Thereby making it easier to reliably reproduce and share your analysis with stakeholders, such as your colleagues and/or the public.

Why Should you R?

A key motivation for this guide comes from the myriad of opportunities for applying modern data science tools and technique to policy analysis. Whether you’re policy adviser working for government, a think tank, a consulting firm or a charity, being able to program in R holds a number of distinct advantages.

Key Advantages:

Automation: Although learning to program can present a challenge, by utilizing R in your work it’s often possible to automate and streamline repetitive data management and analysis tasks – saving time, money and allowing public policy professionals to spend more of their time making better public policies.

Reproducible Research and Transparency: Programming languages promote transparency and reproducibility in policy analysis by design. Helping to ensure your work can be validated, replicated and shared with others if the need arises (such as when you need to respond to information requests made by the public).

Data Visualization and Communication: R is famous for its ability to produce high-quality and visually compelling data visualizations. Making it easier to effectively communicate policy analysis and research to a diverse variety of audiences.

Advanced Statistical Analysis and Data Acquisition: In addition to R opening up opportunities for conducting advanced statistical analysis and modelling, its ability to work with a wide variety of data formats can open up new possibilities for sourcing data. Expanding what’s technically possible at each stage of the policy cycle.

Community Support: R has a large and active community. Meaning that there a large variety of packages, tools, frameworks and support groups readily available.

Cost: Commercial software often requires purchasing licenses, which can come at a significant cost. However, R is free – with the cost associated with learning how to use R easily outweighed by its power and flexibility.

When Should You R?

When we acquire a new skill, it’s common to see opportunities to use it everywhere — even when it’s not the best tool for the job. This is sometimes termed the law of the instrument and can result in an over-reliance on a new, fun and/or familiar tool.

R’s versatility makes it particularly susceptible to this bias. Maybe you’ve been asked to split the lunch bill and your first instinct is to calculate this via the R console. Or perhaps you need to send a set of transactions to your accountant, but you end up developing a regression model to forecast your monthly spending.

Whatever the problem, once you know how to program it can be tempting to over-engineer solutions to simple problems. Although this can be fun and a great way to get comfortable with programming, it can also waste a lot of time. All of a sudden a task that would take 2 minutes in a spreadsheet might consume an hour of our time and come with a variety of unanticipated costs, such as needing to produce documentation for colleagues and continually update the analysis over time.

Before tackling an analysis problem it can therefore be a good idea to spend a couple of minutes thinking through the problem and whether a programming language, like R, is the best tool for the job:

Should I Use a Programming Language?

| Theme | Questions to Ask |

|---|---|

| Data Format | Is the data large or complex enough to benefit from using more advanced tools? Is the data stored in multiple formats and/or locations? Would manual processing be error-prone or time-consuming? |

| Task | Is this analysis repetitive and likely to be performed again in the future? Can the process be broken into a set of logical, programmable steps?< Would automation save significant time in the long run? |

| Collaboration | Will collaborators still be able to contribute effectively? Would the reproducibility of code be an advantage? |

| Technical Requirements | Does the analysis require specialized statistical methods? Are advanced visualizations needed? Would the built-in documentation capabilities of a programming language improve clarity? |

In short, programming languages tend to be good for repetitive tasks that can be defined using a set of logical rules and formulas. However, sometimes simpler tools like spreadsheets can provide faster solutions for straightforward analyses that can be more easily understood and maintained by analysts that lack programming expertise

way, learning how to know when R is well-suited for a task rather than being computational overkill is a valuable skill in itself.

Core Tools

An Introduction to R

R and RStudio

R is a programming language and software environment specifically designed for statistical computing, data analysis and graphics.

RStudio is an integrated development environment (IDE) for R. In essence, RStudio provides a user-friendly workspace that makes working with data in R easier.

R is the engine that does the work we ask of it, while RStudio provides an accessible interface from which we can control it. Together, R and RStudio provide an analysis toolkit to make working with data easier and more efficient.

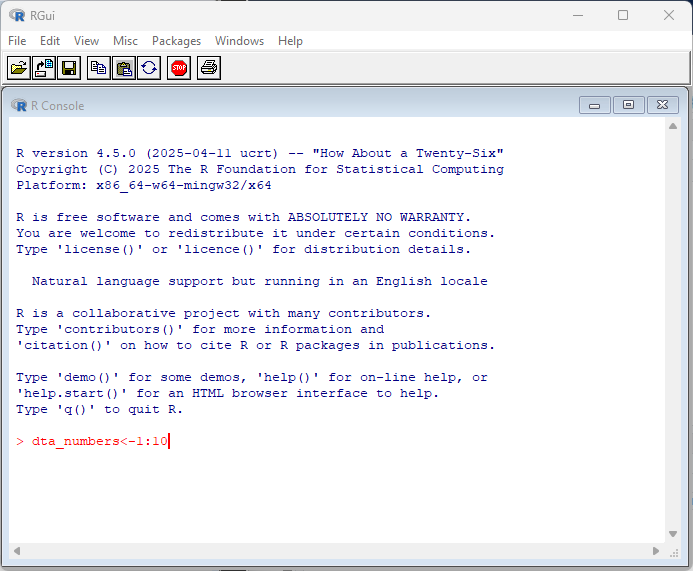

R

R can be installed on Windows, Mac or Linux by following the “Download and Install” links at https://cran.r-project.org/.

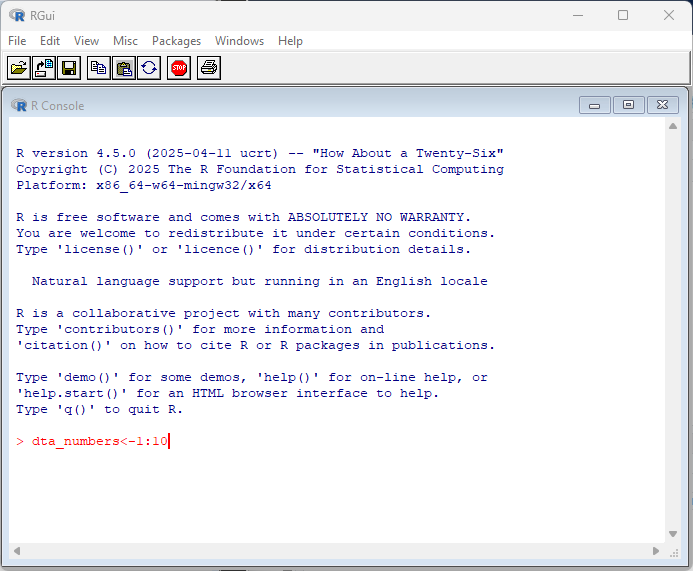

By default, R comes with a simple user interface called ‘RGui’ that provides a way to execute commands. For newcomers to the language it’s worth experimenting with simple commands and calculations. In the example below, dta_numbers<-1:10 creates an object called dta_numbers that includes the numbers from 1:10. You can try simple calculations like you would a calculator.

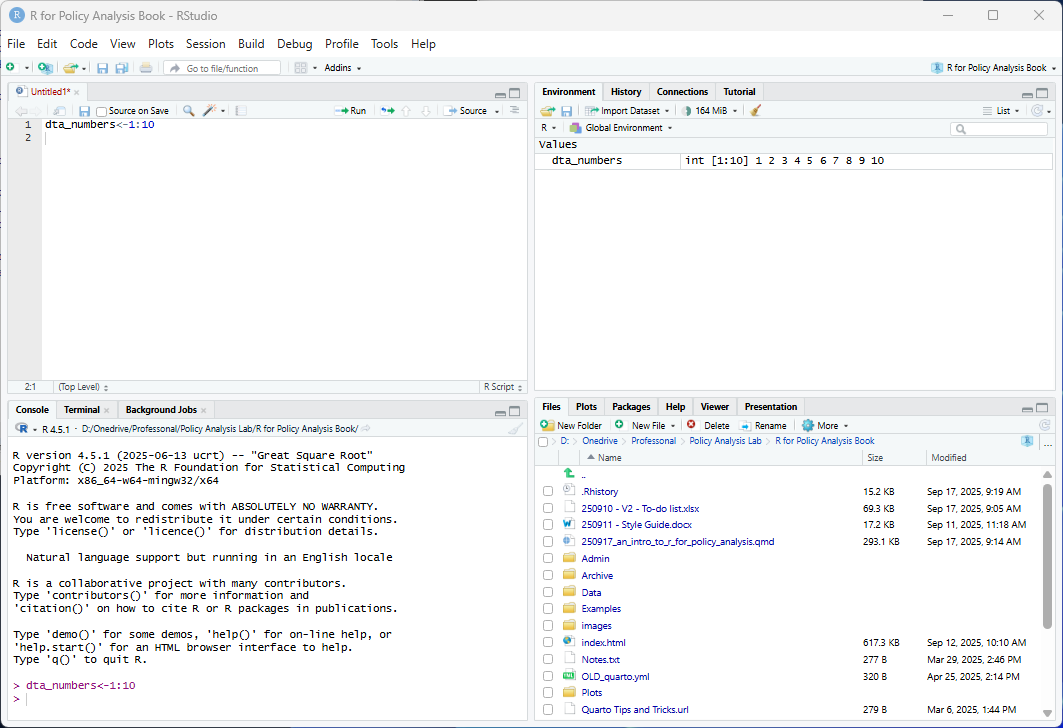

RStudio

R Studio is a more feature-rich IDE for interacting with R. RStudio can be freely installed on Windows, Mac or Linux via https://posit.co/download/rstudio-desktop/

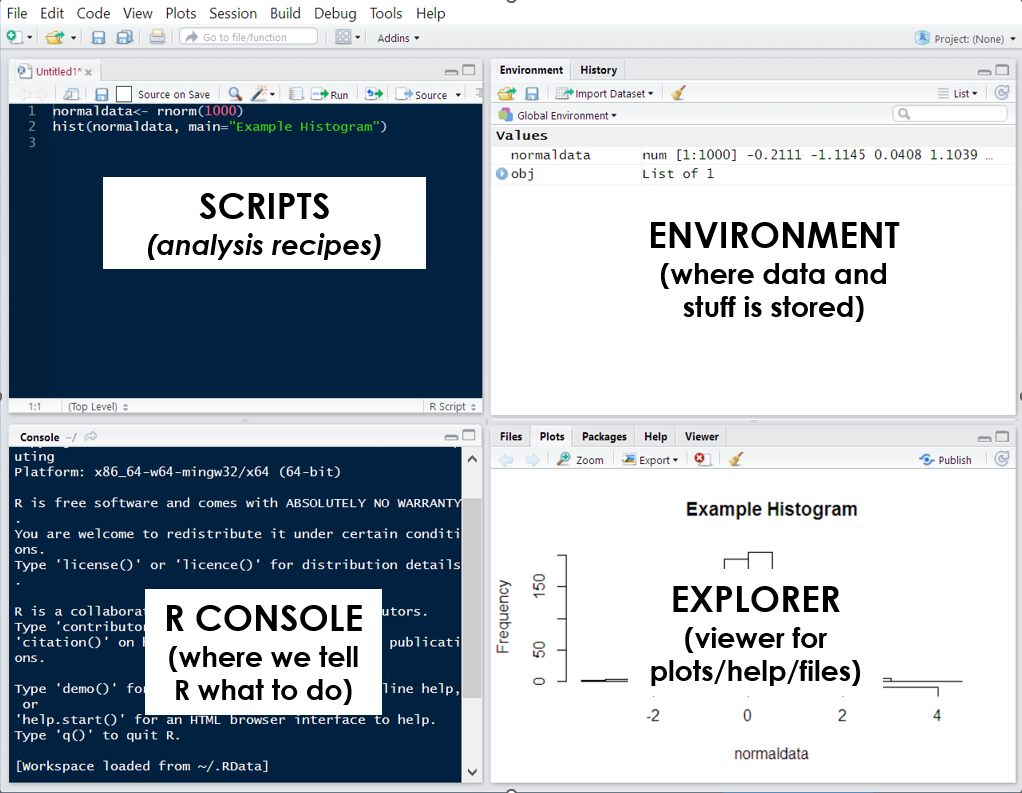

Like the RGui, RStudio is centered around executing commands through the R console. However, it also comes with a set of additional tools for working with R that are organized across four tabs (or panes), including the environment pane that presents objects you’ve created (such as dta_numbers), a pane with a preview of files in your working directory and a pane with a script where you can write code to have R execute.

The R Console

You might have noticed that both RStudio and RGui have a similar window that displays the current version of R you’re working with and some commands to get you started. This is called the R Console and serves as a command-line interface for interacting with R.

By interacting with R, I mean this in a practical sense as we’llfollow the same basic workflow when using the language:

R lets us know that it’s ready to be told what to do (with the

>symbol);We tell R what to do (by executing a set of commands); and

R follows our instructions and responds based on the result.

How R responds will depend on the command(s) we provide to it, but usually it will either return the results we’ve asked for or display an error message to signify that something went wrong. Sometimes R won’t provide a response when executing a command to avoid presenting too much unnecessary information. You can override this behavior by enclosing the command in print():. print(dta_numbers<-1:10)

When R displays the + symbol it means it’s waiting for more information, such as when it’s only provided half of a command.

If you’re new to programming, working with the R console can feel intimidating since it provides minimal guidance on what it is, or how to get started. My advice for newcomers is to spend some time experimenting in the console by executing commands in the console.

By ‘executing’ I just mean entering the code into the console and pressing enter.

If you’re using RStudio you can also execute commands via the scripts pane. Scripts are essentially text files made up of commands and notes that simplify the process for creating analysis recipes. You can run commands in a script by selecting the code you’d like to run in the script pane and selecting ‘Run’. Alternatively, you can place your cursor at the end of a line code in the script and press Ctrl+Enter (command + return for MacOS).

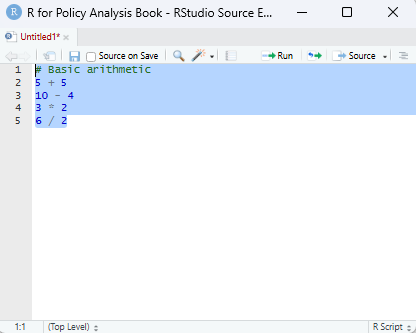

Whether you execute commands directly or via a script the results will be presented in the console. As a start, try copying the commands (or expressions) below and executing them in the R console:

# Basic arithmetic

5 + 5 [1] 1010 - 4 [1] 63 * 2 [1] 66 / 2[1] 3When executing the above commands you’ll notice that R will return a result, just like a calculator. However, we can also use functions and operators to have output more useful information, such as a summary statistic based on data we give provide it:

#generate some numbers

1:10 [1] 1 2 3 4 5 6 7 8 9 10# calculate the mean of the numbers 1 to 10

mean(1:10) [1] 5.5In all of the examples above, R automatically executes the instructions provided and prints the results in the console without saving them. But, if we wanted to store them for later use we could so using the ‘object assignment’ operator ‘<-’:

# Object assignment

x <- 1:10

y <- c(1, 2, 3, 4, 5)

# Storing text strings

names<- c("Chris","Mary","Shazza")If you run the code above you’ll see that R doesn’t return a result. Instead, it silently creates named objects. Notice that in the first line of code the assignment operator <- assigns the numbers one to ten to an object named x.

The code below provides a simple demonstration of how to can be useful in practice. Notice that now that x and y exists, we can refer to them directly in our commands.

# Return the contents of an object names # Object manipulation

x + y [1] 2 4 6 8 10 7 9 11 13 15Once you’ve run the code, observe how R adds the vectors element by element. The first value of x and the first value of y are added together to produce 2, the second values combine to make 4, and so on through each position in the two vectors. This behavior reflects the fact that R is vector-based, which means operations are peformed across an entire vector number-by-number (or element-by-element).

Note: When vectors of unequal length are combined in an operation, R ‘recycles’ the shorter vector by repeating its elements to match the length of the longer vector. In the example above, elements from y are recycled when adding it to x.

Note: If the length of x was not a multiple of y, R will usually not complete and and present a warning.

# Using a function to calculate the mean

mean(y)[1] 3Finally, in the code below we’ve taken the numbers in the object ‘y’ and provided them to the mean() function. You can read what the mean function does by typing ?mean in the console, but as you might of guessed it has taken the contents of y and calculated the arithmetic average.

Notice that the basic workflow is that we give R some information, such as a set of instructions on what to do and R tries to execute what we’ve asked. Ask R to subtract 4 from 10 and it will give us the number 6. Tell it to assign the numbers 1 to 5 to an object called ‘y’ and it will silently store those numbers as an object. In short, R takes a set of inputs, processes them and returns a series of results that are (hopefully) useful to us.

RStudio

RStudio is an integrated development environment (IDE) designed to help us use R effectively. By default, RStudio is divided into four separate windows (or ‘panes’) that provide a set of tools and functions to help us work with R:

Script Editor: At a basic level, the script window is just a fancy text editor where you can write your code. When you click the ‘Run’ button at the top of the pane, RStudio will send selected code to the R console to be executed.

The R Console: This is where we interact with R: either by directly typing our commands in the console or having RStudio do it for us. When running code from our analysis script, this is where the code is sent to be executed. This is also where information is displayed by, such as results of our code, status messages and error messages.

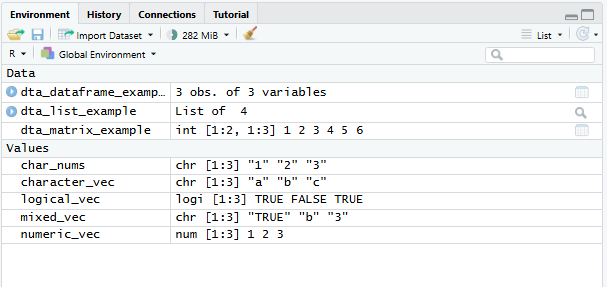

Environment and History:

Environment: presents what ‘objects’ (such as data) have been loaded into the R environment. For instance, after executing dta_rnorm<-rnorm(100) the object ‘dta_rnorm’ will appear here.

History: provides a history of commands sent to the R console.

Files, Plots, Packages, and Help:

File: A simple file explorer.

Plots: where plots and other outputs will be presented by RStudio.

Packages: a list of installed packaged (note loaded packages are ticked)

Help: An interface for searching and viewing help files.

Useful options

Dark mode: you might notice that a lot of my screenshots from RStudio are in dark mode. I use the Cobalt theme. You can change the theme of RStudio via ‘Global Options’ > Appearance’ > ‘Editor theme’.

Base pipes: Most of the time I’ll use base pipes (|>) rather than magrittr pipes (%>%) in my code. You can enable this as the default in RStudio via ‘Global Options’ > ‘Code’ > ‘Editing’ > ‘Use native pipe operator’.

Code wrapping: you can enable the soft-wrapping of source files to make it easier to view long lines of code. This option is available via ‘Global Options’ > ‘Code’ > ‘Editing’ > ‘Soft-wrap source files’.

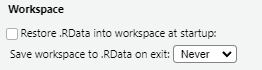

Start from a clean slate

When you first exit R, you’re likely to be asked whether you’d like to save a copy of your workspace, which contains a copy of objects you were working with.

Don’t.

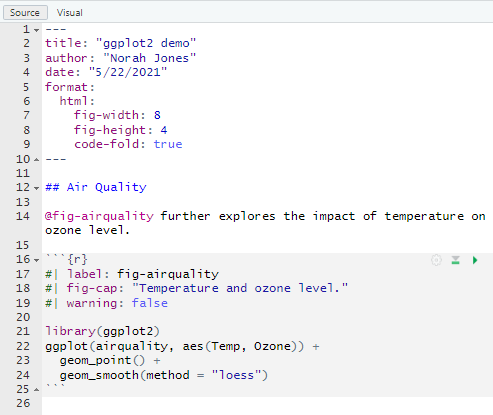

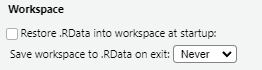

In fact, one of the first settings I recommend new users implement is to disable restoring and saving your workspace in RStudio via Tools > Global Options > General: Workspace

The idea behind this is to encourage us to outline every step of an analysis recipe in our scripts. Encouraging you to create logical and well-documented scripts that provide a reproducible record of the data used, how it was cleaned and how results were produced. In addition, to encouraging reproducibility in our work it also reduces the risk of carrying forward errors from previous sessions, such as actions that we forgot to save in our script. Ensuring not only that our recipe works the same way each time its run, but that fatal errors are spotted and dealt with more quickly.

Foundations

Thinking Like A Programmer

Using point-and-click tools for data analysis follows a similar pattern: you have a question to answer, some data to answer it with, and a collection of tools presented to you that you can interact with. Just like how we interact with the world around us, you’re presented with a series of visual queues about the consequences of each action you take. Analyzing a dataset then becomes a matter of clicking the right tools in the right order and observing the results.

Programming languages like R work a little differently. Instead of having a collection of tools presented to you, you’re provided with an empty text box where you can tell the computer what to do. There’s no icons to click, menus to navigate or documentation presented to you. Instead, you’re expected to enter the right commands in the right order and ask for visual queues when you need them.

Analyzing data using point-and-click software is like ordering from a menu — the options and their ingredients are chosen for you. Whereas programming is like cooking for yourself — you choose the ingredients and how to cook them.This difference presents a fundamental trade-off: point-and-click software sacrifices flexibility and control for ease of use: programming sacrifices ease of use for flexibility and control.

For those that regularly work with data, R provides invaluable power and flexibility: offering an almost unlimited array of tools that can be applied in whatever way makes sense for your data. Need to import and clean data stored in hundreds of excel files? R can help with this. Interested in transforming your statistical modelling into an interactive dashboard? R has you covered there too. But, there’s a trade-off: learning to program requires that getting comfortable writing your own recipes rather than ordering from the menu.

The Iron Chef: Teaching Robots to Cook

To take the metaphor further than I probably should: think of writing code as creating a recipe for a robot chef. Robots are great at math and following instructions, but they can’t taste or interpret ambiguous instructions. Provide a vague cake recipe to a human and they’ll likely figure it out. Provide it to a robot chef and they might set the kitchen on fire.

This is because computers are incredibly fast, but purely literal, machines. They excel at processing vast amounts of data and performing calculations at speeds far beyond human capability. However, they lack the human ability to read between the lines and intelligently respond to ambiguity. Tell a computer to do the wrong thing and it will just do it really quickly.

Writing code therefore means leaving nothing to chance. We need to provide the computer with the right ingredients, in the correct format and provide it with a precise set of instructions in the in the correct order.

This can feel daunting as you’re not only learning a new language, but the logic that dictates how it functions.

The good news is this that you’ve done this before. None of us are born knowing how to talk in our native tongue. Instead, we learn by watching and mimicking those around us. Learning to code is similar. We spend time mimicking others, troubleshooting errors and practicing the basics until we’re armed with a large enough vocabulary to write the recipes we need.

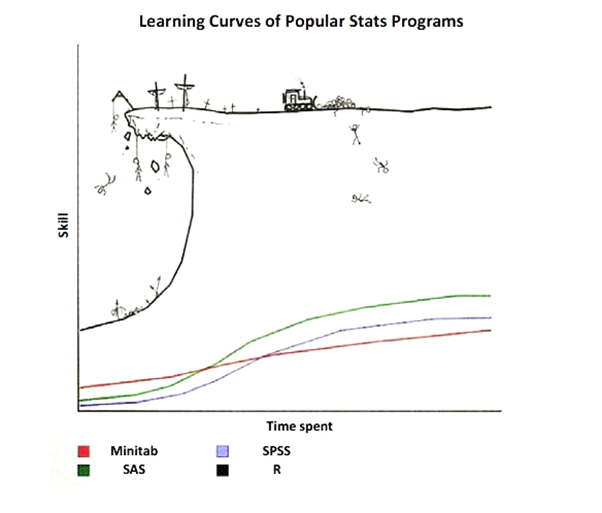

The journey from confusion to competence follows a predictable arc and is worth scaling. Start with the basics: import some data, apply a simple function and create a terrible graph. Encounter an error? Figure out its source, why it occurred and how to avoid it next time. Step by step, experiment, make mistakes, and celebrate each victory on your journey to master R.

Some Basic Architecture

Although working with R is the best way to learn it. There are some basic concepts that it’s worth becoming familiar with before you start. We’ll cover each of these in greater detail throughout the book, but I describe the basic building blocks as being divided into three themes:

Language: How you talk to R (e.g. operators, commands and functions).

Objects: How R organizes data and information (e.g. dataframes, variables and lists).

Software: How you interact with R (e.g. RStudio, the console and scripts).

Language

Think of R as a language for talking to computers about data. Like any language, R has its own symbols, words and grammar that need to be applied to communicate correctly:

Operators are R’s most basic vocabulary: a set of single symbols that perform actions:

Math operators are used for basic arithmetic ,such as

2 + 3,10 - 5,4 * 2and8 / 2Assignment operators store values.

dta_my_data <- 100saves the value 100 to an object called dta_my_data.Comparison such as

5 > 3which tests whether 5 greater than 3.

Expressions (or commands) are a set of complete instructions for R to follow. When you type 2 + 3 into the console and hit Enter, R will return 5.

Objects

Objects are containers that R uses to hold information. When we execute dta_my_data <- 100 R creates an object with the name dta_my_data. Functions, such as mean() are also objects, except they contain a set of instructions that R can follow:

Functions are a collection of commands that handle more complex tasks. Instead of writing 50 lines of code to calculate an average, you use the mean(), or plot(x, y) can be used to create a graph.

Packages expand the functionality of R. Each package includes a collection of objects, such as functions and data, designed to make analysis and visualization easier. At the time of writing there were more than 22,000 packages available on CRAN. Packages can be installed using install.packages("package_name") and loaded using library(package_name).

Data Structures are containers that organize your information in R:

Vectors store multiple values of the same type e.g.

ages <- c(25, 30, 22, 45)creates a vector of ages.Dataframes organize data across rows and columns (like a spreadsheet). Each column has its own name and can be thought of as a single vector (or variable).

Software

We’ll be using specific software to interact with the R language, such as RStudio. Think of these as ‘helpers’ to work with R separate to the language itself:

RStudio provides a user-friendly workspace with panels for writing code, viewing results, and managing analysis.

Scripts are text files for writing code. RStudio has a script pane for authoring scripts.

Base R

By default R comes with a set of tools and functionality to let you immediately work with data, such as simple data structures, and functions to manipulate data, calculate summary statistics and produce plots. This default functionality is referred to as base R.

I don’t think anyone actually believes that R is designed to make everyone happy. For me, R does about 99% of the things I need to do, but sadly, when I need to order a pizza, I still have to pick up the telephone.

Roger D. Peng

Base R provides the foundation for everything you’ll do in R. While you’ll often add specialized packages to help with specific tasks, these additions always build on base R’s core capabilities. When somebody describes their analysis as being written in base R they mean it solely relies on the functionality that comes with R when it’s first installed.

You can read more about the design of base R here.

Note: While base R remains mostly stable across versions, code written for R 4.4 may not work in R 2.1 due to some changes in default behaviors and functionality over time. This is why it’s important to document the version of R (and R packages) you’re using when writing analysis.

Installing and Loading Packages

Packages can extend the capability of R by providing access to additional commands and functions to base R. Although you can install packages from a variety of sources, like GitHub, by default R installs packages from CRAN (the Comprehensive R Archive Network), which is a central repository of R packages. You can search for packages by name or topic at r-packages.io and r-universe.dev.

Use the install.packages("Package_Name") command to install a new package in R. Once a package is installed you can load it using library(Package_Name). It’s also possible to explcitely utilize a function without loading the package using package_name::function_name().

Packages can also be installed, loaded and updated in the Packages tab in the explorer window of RStudio. Packages with a tick next to their name have been loaded. Click  to check for package updates.

to check for package updates.

Most packages will come with documentation that explains its purpose and how to use individual functions. To access a package’s documentation you can execute help(package= "package_name") in the console. For instance, help(package = "base") will display the documentation for the base package.

The tidyverse: a Modern Analysis Toolkit

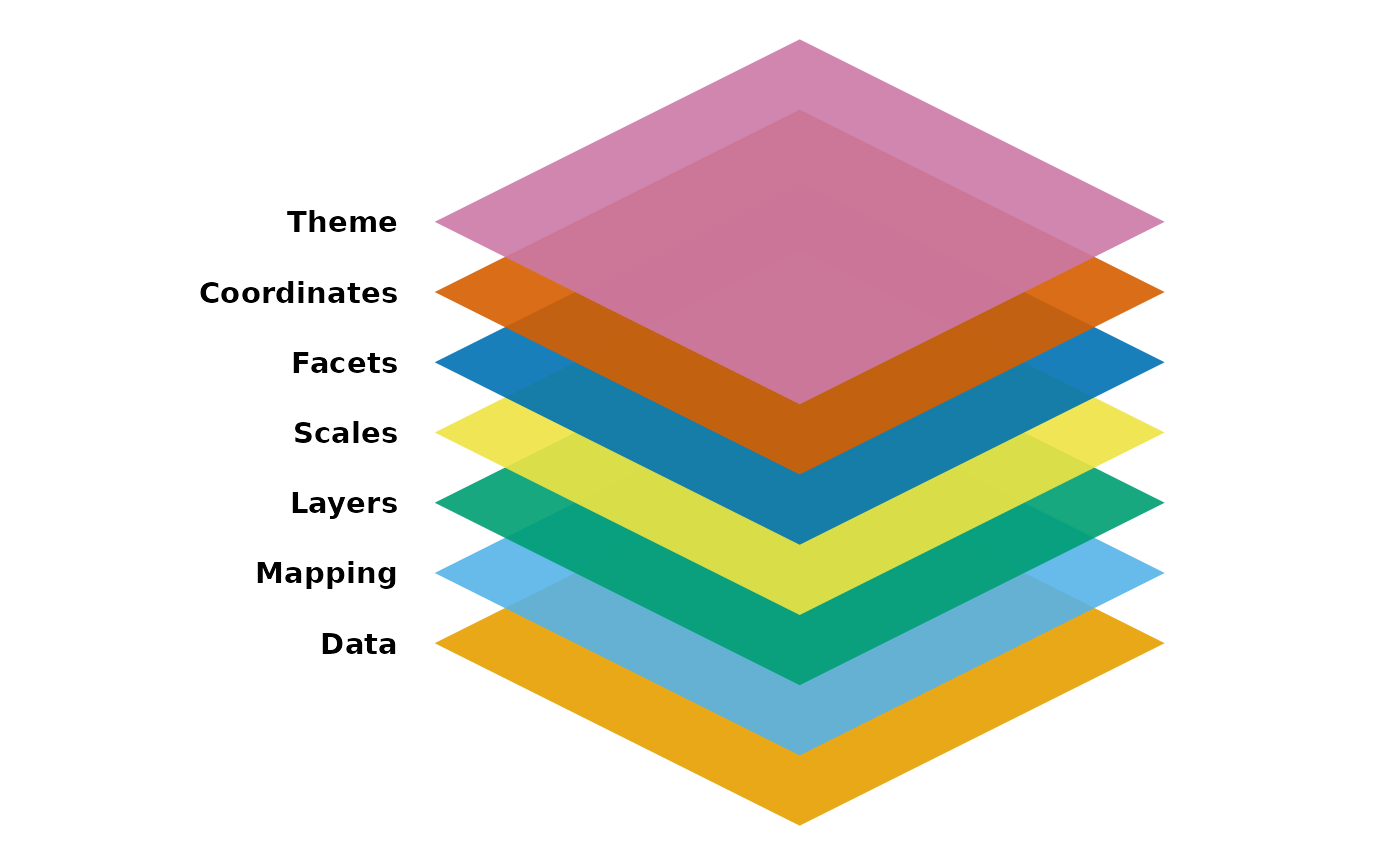

The tidyverse is a collection of R packages designed to make working with data simpler. Aside from including a collection of tools to make data science easier, packages in the tidyverse are also designed around a common set of principles and grammar to make it easier to use. For these reasons, we’ll primarily be using packages from the tidyverse in this guide, including:

- dplyr: Provides a set of tools for filtering, selecting, mutating, and summarizing data (link).

- forcats: Helps with the creation, manipulation and analysis and of factors, which are useful for categorical variables (link).

- ggplot2: Implements a consistent ‘grammar’ for producing high quality statistical visualizations (link).

- lubridate: Makes managing dates and times easier (link).

- purrr: Provides a set of tool that simplify functional programming, such as completing the same operation across multiple datasets without re-writing the same code (link).

- readr: Facilitates efficient importation of rectangular data formats (link).

- stringr: Includes a set of functions to help with managing and manipulating string, such as text data or information stored as text (link).

- tidyr: Includes data reshaping tools to assist with data wrangling tasks, such as reshaping (link).

To install the tidyverse in R you can execute install.packages('tidyverse') in the R console. Once the tidyverse is installed you’ll need to load the package to work with them using library(tidyverse).

Other Packages

Although the tidyverse will do a lot of the heavy lifting in this guide, we’ll also be drawing on other packages available on CRAN, including:

HistData: which provides a set of interesting datasets (link).

janitor: To help us examine and clean data (link).

readxl: Designed to help with reading data from Excel files (link). Although this package is part of the tidyverse, it will need to be installed and loaded separately to be used.

skimr: provides a set of simple summary functions for exploring data (link).

wbstats: For downloading data from the World Bank (link).

Why Learn Base R?

If the Tidyverse is So Great, Why Bother with Base R?

Although this guide will mainly focus on using tools available in packages from the ‘Tidyverse’, becoming familiar with base R holds a number of advantages:

Conceptual understanding: Base R provides much of the logic and/or functionality used by the 20,000+ R packages currently available (including the tidyverse!) so should form a backbone of learning how to use R.

Collaboration and communication: Base R is frequently used by others in the R community. Meaning that understanding base R will make it easier to find help and practical examples when trying to conduct analysis and troubleshoot problems. -

Flexibility and interdisciplinary: As base R provides the basis for a large number of packages, being comfortable with base R will make it easier to draw on tools offered by packages outside the tidyverse. Providing access to specialized functionality like geo-spatial mapping, building machine learning models and producing interactive web-based dashboards.

Speed and convenience: In some cases base R can be be easier, faster and more efficient than the tidyverse alternative.

Objects

Objects serve as containers that hold information and data. Each object type has its own format and set of attributes that can make it well-adapted to some tasks, but not others. Some common object types include:

Vectors ~ an ordered collection of values of the same type

Matrices ~ multiple columns of values of the same type

Data Frames (or Tibbles) ~ one or more columns of any element class

Lists ~ Collection of objects, such as more than one dataframe

Functions ~ R code providing a set of instructions to fulfill when called

To create an object, we can use ‘<-’. To delete an object you can use rm(object_name). In the example below we’ve created three vectors, a dataframe and a simple function. These objects are then deleted using rm().

#create vectors

dta_age <- c(34,42,19)

dta_names<-c("Mary","Kelvin","Susan")

dta_female<-c(TRUE, FALSE, TRUE)

#create a dataframe

dta_class<-data.frame(dta_names,dta_age,dta_female)

#create a function

fnc_count<-function(from=1, to=10) {from:to}

# delete the objects

rm(dta_age,dta_names,dta_female,dta_class,fnc_count)Note: While = can be used for assignment, <- is preferred. This is because ‘=’ has a lot of other uses, such as specifying the value of arguments in functions, creating variables and testing logical conditions.

Functions

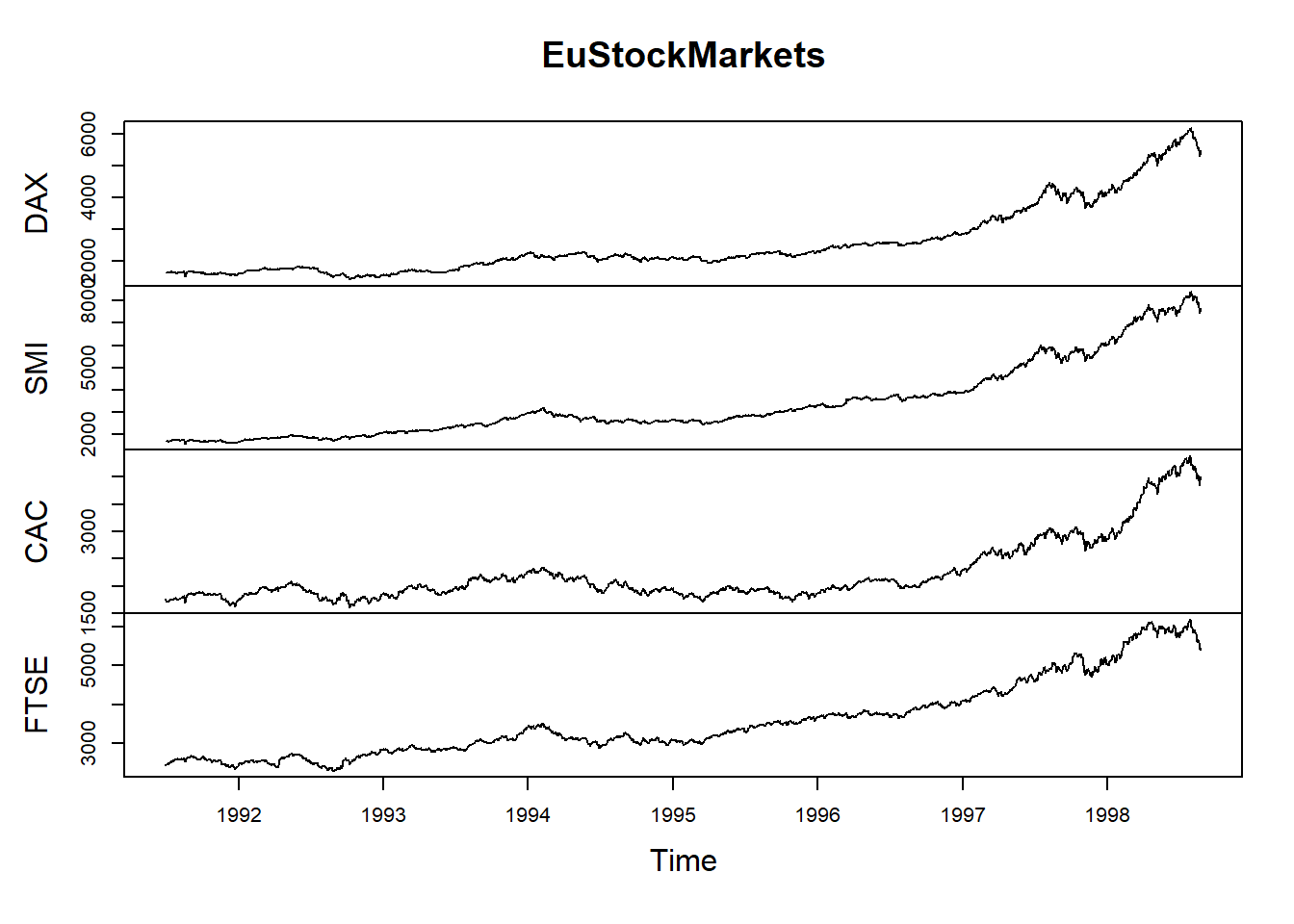

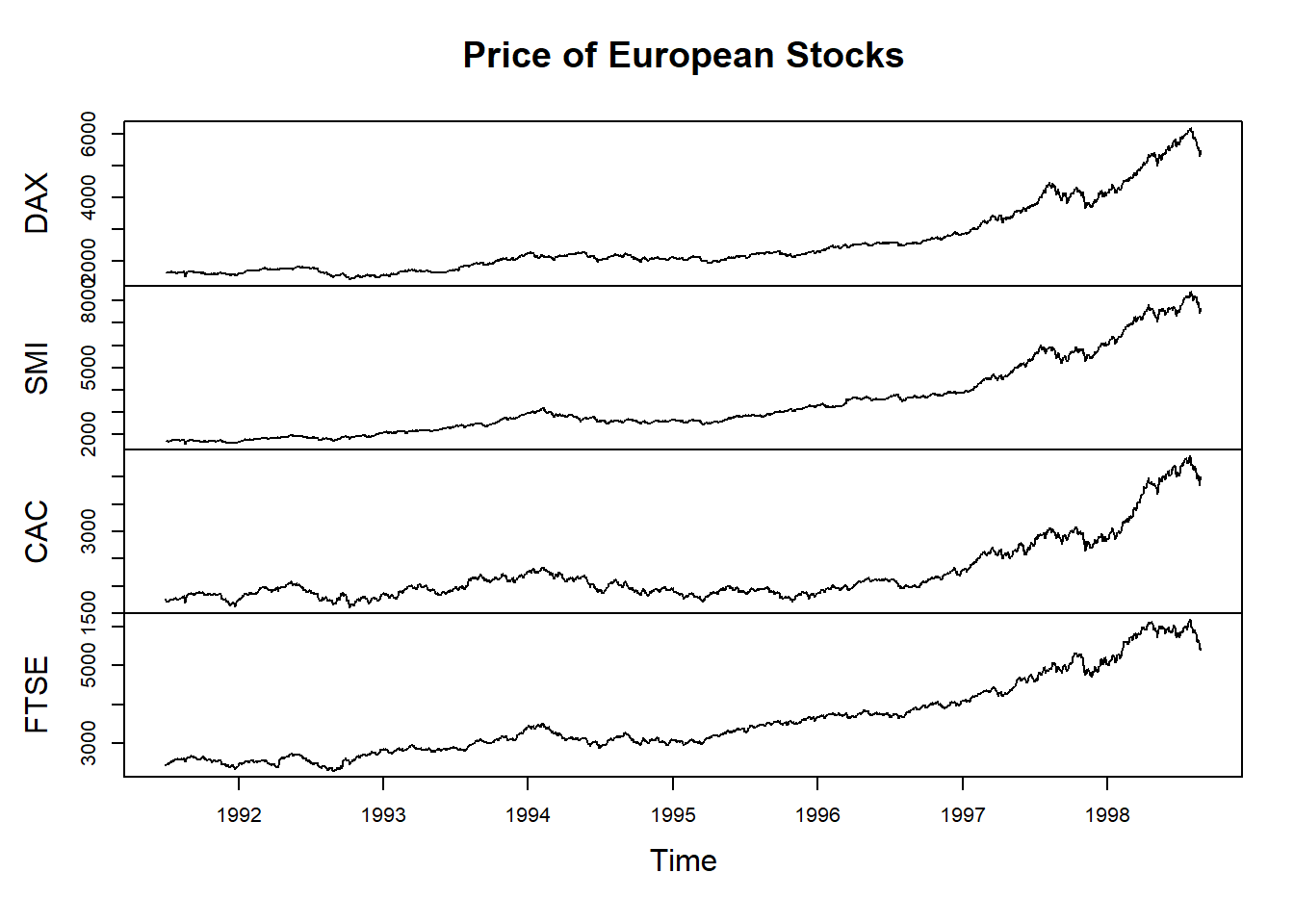

Functions are reusable pieces of code that complete a bundle of task based on the ingredients provided to it. A function will normally have a name which allows us to all upon it in R, like max(), summary() and table() and a set of parameters that can be used to customize its behavior. For instance, in the code below plot() uses the data called EuStockMarkets to produce a plot. The parameter main is then used to let plot() know what the title should be.

#take a look at our ingredients (the data):

head(EuStockMarkets)Time Series:

Start = c(1991, 130)

End = c(1991, 135)

Frequency = 260

DAX SMI CAC FTSE

1991.496 1628.75 1678.1 1772.8 2443.6

1991.500 1613.63 1688.5 1750.5 2460.2

1991.504 1606.51 1678.6 1718.0 2448.2

1991.508 1621.04 1684.1 1708.1 2470.4

1991.512 1618.16 1686.6 1723.1 2484.7

1991.515 1610.61 1671.6 1714.3 2466.8#produce a plot with the data:

plot(EuStockMarkets)

#produce a plot with the data while specifying the title to use:

plot(EuStockMarkets, main="Price of European Stocks")

Functions are the essential tools for working with data. Much of programming involves figuring out which functions to use, in what order and with which ingredients. Sometimes a function expects that we provide it with a collection of numbers, sometimes it expects a data table or maybe it is designed to adapt based on the ingredients provided to it.

Documentation

Almost all functions come with in-built help that explains what a function does and how to use it. To access a function’s help you can execute the function name with a ? at the front in the console. For instance, executing the command ?summary() will display the help file for the summary() function.

Don’t worry if the in-built help seems confusing. It will become more and more useful as you become comfortable with R.

Parameters and Arguments

We’ll sometimes use the terms parameters and arguments when talking about functions:

- Parameters are named options that define how a function behaves. For instance, the

mainparameter inplot()can be used to specify a custom title for a plot. - Arguments are the values provided to a parameter. In example above,

"Price of European Stocks"is the argument specified for the parametermain.

Data Structures

Vectors

Vectors are collections of elements of the same data type, such as numeric values, character strings, or logical values. When working with dataframes, each column (or variable) is essentially a vector that stores a specific type of information, such as a collection of names, ages, or whether or not members of the public are eligible for a grant.

In the example below we’ve created a numeric, character and logical vector.

#create vectors

dta_age <- c(34,42,19)

dta_names<-c("Mary","Kelvin","Susan")

dta_female<-c(TRUE, FALSE, TRUE)Common vector classes include:

Character (chr):

c("Ben", "Sheryl", "Bazza")Numeric (num):

c(1.2, 45.0, 21.6)Integer (int):

c(11, 47, 62)Dates (Date):

"21-12-03 UTC"Logical (logi):

c(TRUE, FALSE)Factors (Factor):

factor(x=c(1,1,0), c("Manual"=0,"Automatic"=1))

Because vectors store information in a single class, R will try to ‘coerce’ elements into a single class that avoids losing information about the value. In the example below, the source values are coerced to the character class to avoid losing information.

dta_pizza<-c(TRUE, "Maybe", 4)Matrices

Matrices a two-dimensional collection of elements of the same type (e.g. numeric, character, logical etc).

# Create a matrix

dta_m <- matrix(1:9, nrow = 3, ncol = 3)

dta_mDataframes

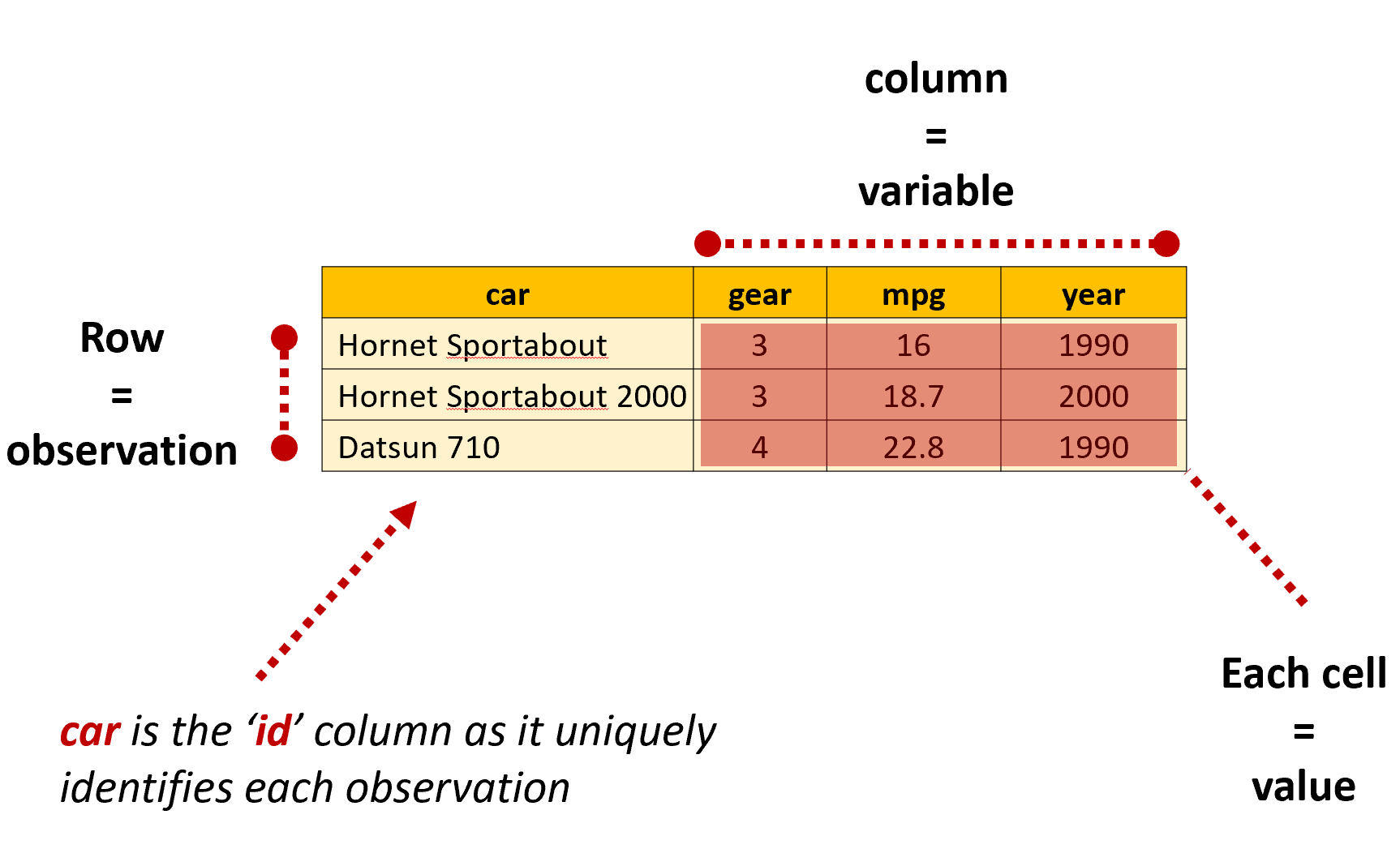

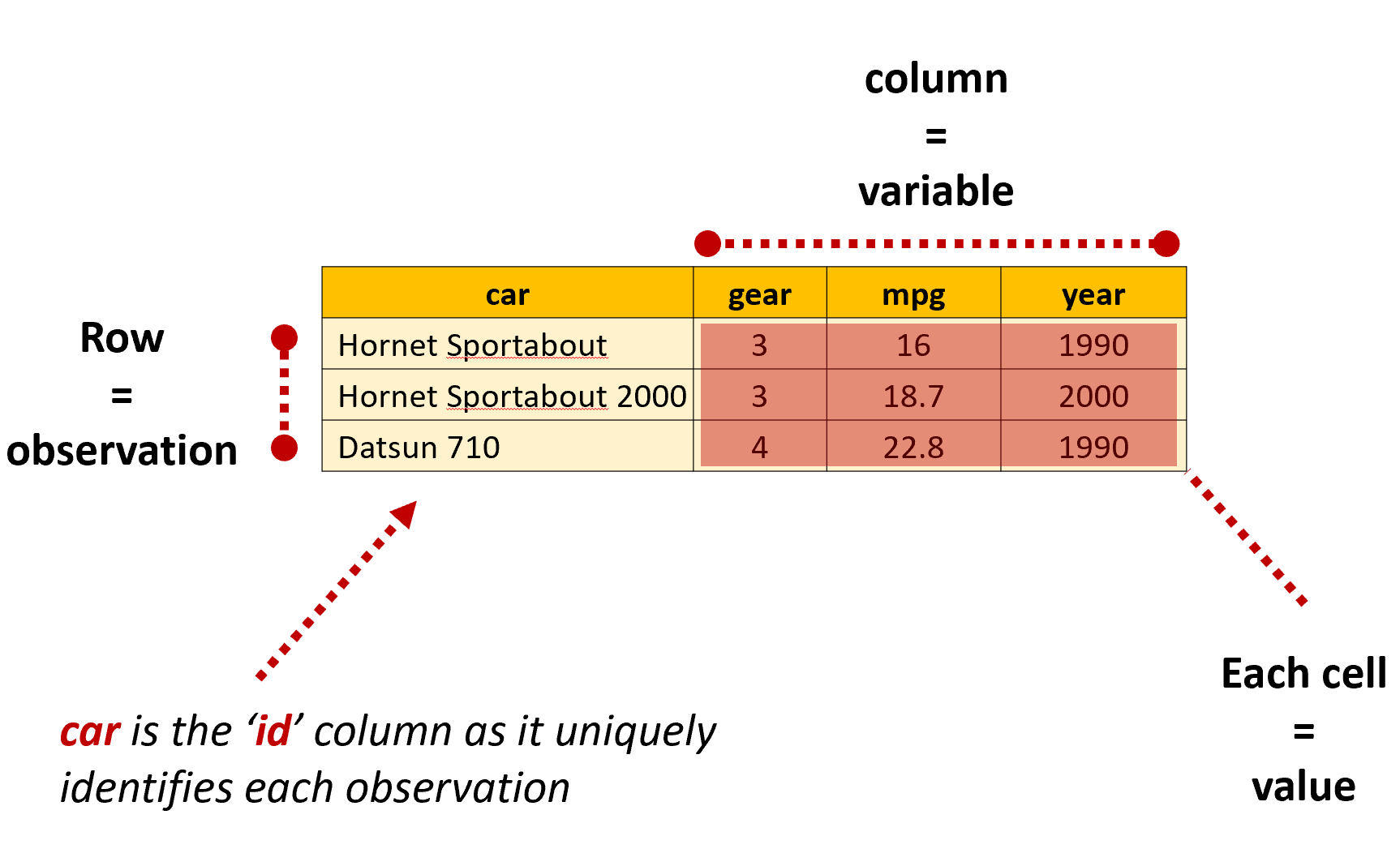

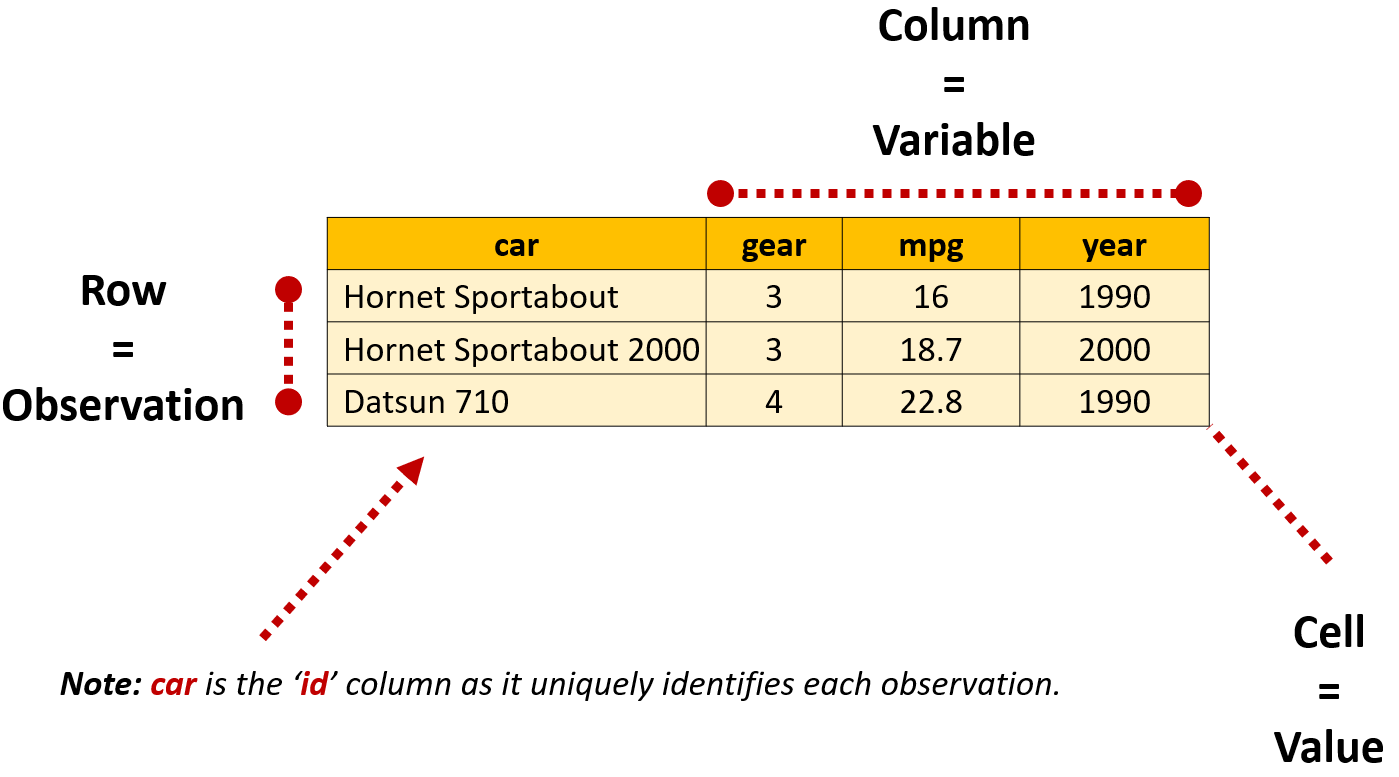

In R, dataframes are data tables that organize data in rows and columns, similar to a spreadsheet. When a dataframe is in a ‘tidy’ format, each row will present information about a single observation for each variable included in the dataframe. At the intersection of each row and column is a value, which is sometimes referred to as a single ‘cell’ or ‘element’.

Dataframes can be thought of as a collection of vectors with the same length that are stuck together. They are the most common data structured used when working with data in R:

#create vectors

dta_age <- c(34,42,19)

dta_names<-c("Mary","Kelvin","Susan")

dta_female<-c(TRUE, FALSE, TRUE)

#create a dataframe

dta_class<-data.frame(dta_names,dta_age,dta_female)Tibbles

You’ll also come across something called ‘tibbles’ throughout your R journey. Tibbles are a modern reimagining of the data.frame from the tidyverse that tries to keep what works and drop what doesn’t. In most instances when working with tidyverse packages tibbles are the preferred choice.

Tidy Data

We’ll talk more about different data shapes later in the book, but the key thing to remember is that R generally works best when our data is organized in a tidy format: where each row is an observation, each column is a variable and each cell a single value.

Classes

The ‘class’ of an element, vector or object refers to the characteristics and structure of information stored in an object. Classes are useful as they define how information should be stored, displayed and manipulated.

Although the objects were created in a similar way, they each have a distinct class based on the information they store. To see the class of an object you can use class(). Notice in the example below that the class of dta_female is ‘logical’ as the object is a vector containing logical values.

#create vectors

dta_age <- c(34,42,19)

dta_names<-c("Mary","Kelvin","Susan")

dta_female<-c(TRUE, FALSE, TRUE)

#create a dataframe

dta_class<-data.frame(dta_names,dta_age,dta_female)

#check the class of an object

class(dta_names) [1] "character"class(dta_female)[1] "logical"Sequences

The colon operator ‘:’ can be used to create sequences using the format from:to

For instance, ‘1:10’ when executed will return the numbers from one to ten, whereas 10:1 will return the same numbers in the opposite order.

# Output a sequence of numbers from 1 to 10

1:10 [1] 1 2 3 4 5 6 7 8 9 10# now from 20 to 3

20:3 [1] 20 19 18 17 16 15 14 13 12 11 10 9 8 7 6 5 4 3#and we can combine it with other values

pi:10[1] 3.141593 4.141593 5.141593 6.141593 7.141593 8.141593 9.141593It’s also possible to have R return more complicated sequences using seq() and rep(). For instance, if we wanted to return every second number between 0 and 20 we could use:

# Using seq() function

seq(from=0, to=20, by=2) [1] 0 2 4 6 8 10 12 14 16 18 20Or to repeat the number 3 twenty times we could use:

rep(3, times=20) [1] 3 3 3 3 3 3 3 3 3 3 3 3 3 3 3 3 3 3 3 3Aside from this being useful for creating simple vectors to play with, sequences are frequently used for performing more advanced tasks, such as individually importing individual files in a list.

Combining Elements

Concatenate

When you first come across R code in the wild you’re likely to come across the c(), which combines or concatenates values into a vector or list. For instance, dta_vector <- c(1,2,3,4) would combine the numbers 1 to 4 together to create the vector dta_vector.

We’ll often need to use c() when specifying multiple parameters. In the example below, notice how it has been used to specify which values should be considered as missing (NA) when importing an excel file:

# Specifies both blank cells and "99" as missing values

read_excel("my_data.xlsx", na = c("", "99")) paste()

paste() and paste0 also combine elements into a vector, but unlike c() they do this on an element-by-element basis. For instance, c(1:3,3:1) will combine the numbers in the order they are provided, whereas paste(1:3,3:1) will combine the numbers element-by-element, resulting in “1 3” “2 2” “3 1”.

Notice that paste() has also added a space between each number, which is the function’s default behavior unless we specify a different value for the sep argument. paste0() works in the same way as paste(), but does not add a deliminator between elements i.e. sep=““.

#create a simple string of numbers based on their ranking

ref_rank <- c(5,1,7)

#create a vector of names

ref_names<- c("James","Sarah","Emil")

#combine rank and names into combined text strings #set the seperator as a space using the sep argument

paste("Name:", ref_names,"Rank:",ref_rank, sep=" ") [1] "Name: James Rank: 5" "Name: Sarah Rank: 1" "Name: Emil Rank: 7" #Demonstrate difference from c()

c("Name:", ref_names,"Rank:",ref_rank)[1] "Name:" "James" "Sarah" "Emil" "Rank:" "5" "1" "7" Logical Operators

Logical operators allow us to test particular conditions are met, such as whether a survey respondent’s age is greater than 25. Whenever testing a logical condition (or set of conditions) R will either return TRUE, FALSE or NA based on the result of the test. For instance 11>=3 will return TRUE, 6>2 will return FALSE and 4>NA will return NA. When applied to a vector a result will be returned for each element, for instance 2:4>=3 will return FALSE TRUE TRUE

It’s also possible to test whether multiple conditions are met using & and |. The and operator (& ) tests whether all specifed conditions are met and the or operator (|) tests whether at least one condition is met. For instance 1:5>=3 & 1:5 <5 returns FALSE TRUE FALSE as a number must be greater or equal to three and less than five, where 2:4>=3 | 2:4 <1 returns FALSE TRUE TRUE as a number can meet either of the conditions.

| Operator | Description | Example | Result |

|---|---|---|---|

== |

Equal to | 5 == 5 |

TRUE |

!= |

Not equal to | 5 != 3 |

TRUE |

> |

Greater than | 5 > 3 |

TRUE |

< |

Less than | 5 < 3 |

FALSE |

>= |

Greater than or equal to | 5 >= 5 |

TRUE |

<= |

Less than or equal to | 5 <= 3 |

FALSE |

& |

AND | (5 > 3) & (5 < 7) |

TRUE |

| |

OR | (5 > 7) | (5 < 7) |

TRUE |

Logical operators will become increasingly handy for working with data and controlling how your code runs as you progress. When cleaning data this might mean dropping all values equal to NA or identifying unusual values that warrant further investigation. You might also want to test which tax thresholds apply to individuals in a modelling exercise. Or maybe you’d like to have your code behave differently based on whether a condition is met, such as by applying a different function depending on the sample size you’re working with.

Errors, Warnings and Messages

The more you experiment working with R, the more seemingly inscrutable messages you’re likely to encounter. Maybe you’ve tried to assign some data to an object, but have entered < instead f <-. Perhaps you’d like to import some data from an excel file, but have pointed R to the wrong place on your hard drive. Or you might have tried to apply the mean() function on a vector stored as characters, not numbers.

Whatever the case, R will usually let you know when something goes wrong with a set of obnoxious and sometimes inscrubtable errors, warnings or messages:

Errors: occur when R cannot execute the code you sent it. This usually means the code failed and no output was generated.

Warning: indicates that something in your code might not have worked as expected, so you should check its work.

Messages: are notes from a function reminding you of something important, such as its default behavior, missing values that have been introduced and/or how to interpret the results.

List adapted from: Travis Loux, 2024, R for the Uninitiated

Deciphering Errors

Newcomers are right to be confused by R’s seemingly indecipherable collection of errors, warnings and messages. Learning a programming language requires that we learn how to intepret strange symbols governed by a set of rules and logic designed for computers. If you were learning wizardry, mispronouncing a syllable might mean you accidentally conjure a toad. When you’re learning to program, using the wrong symbol or function might crash your computer.

Although I don’t want to take this magical analogy (sorry) too far, I find it to be an an apt way to describe what I found particularly frustrating when learning to program: having no idea what an error meant gave me no path for fixing it or knowing where to look for help.

The good news is that when you first start to program you’re likely to make the same mistakes and see the same types of messages. In fact, Noam Ross found this outwhen analyzing Stack Overflow questions, finding that a small number of errors account for the majority of messages you’re likely to get:

- “Could not find function” - Usually from typing the function name incorrectly or failing to load the package it comes from, such typing

Read.csv()instead ofread.csv()or referencing theggplot()function before loading the package vialibrary(ggplot2). - “Error in if” - Caused by non-logical data or missing values being provided when a condition is tested. A simple example of this is

if (NA > 5) print("Success!")asNAis returned by the test. - “Error in eval” - Results when a function tries to use an object it can’t find, such as a data object that hasn’t been loaded.

subset(iris, Species == nonexistent)produces this message. - “Cannot open” - Attempts to read inaccessible or non-existent files. An obvious example of this is

read.csv("A path that doesn't exist"), but you’re likely to encounter this when you’ve specified the wrong location for a file or if the file is open by another program. - “No applicable method” - Using a function on an unsupported data types. This is rarer, but usually occurs when a function is provided the wrong type of object, such as a list instead of a dataframe.

- “Subscript out of bounds” - Trying to access elements or dimensions that don’t exist, such as

pi[[2]]aspiis a single vector. - Object of type closure is not subsettable - such as when you try to use subsetting operators (like

[,[[, or$) on a function instead of a data structure. A simple example of this ismean[](which is trying to subset a function).

Adapted from: David Smith, 30/3/2015, The most common R error messages, Revolution Analytics

Importing Data

If you’re new to programming, even importing data can be a challenge. Unlike more traditional ‘point and click’ statistical software, you can’t simply click a data file to import it into R. Instead, you’ll need to provide the location of the file to a function that can read the data and save it as an object using <-.

While this can feel finicky at first, once you’ve found the function that you need importing data follows the same basic steps:

Load the library with the function you need to read the data;

Letting the function know where the file is stored on your computer; and

Executing the function while assigning the results to a named object.

The code below provides a simple example of this. Notice that the package haven is loaded so read_spss() can be used to read the SPSS file. The location of the file on the hard drive is then provided to read_spss() to import it and save it as an object called dta_affordable_housing.

library(haven)

dta_affordable_housing<-read_spss("C:/Working Directory/Data/Affordable_Housing_by_Town.sav")Note: the . symbol can also be used to refer to locations within the working directory, which can be specified separately. For instance, if the location of the working directory is set to C:/Working Directory/ the file path “./Data/Affordable_Housing_by_Town.sav" could be used. This just tells R that the affordable housing data file is located in a folder called ‘Data’ within the working directory.

Exploratory and Explanatory Analysis

Throughout this guide we’ll sometimes describe analysis as exploratory or explanatory.

Exploratory analysis describes the steps we take to understand a dataset and figure out how to answer our focus question(s). This might include understanding how data is organized, which variables are available and exploring relationships between variables. Outputs produced at the exploratory analysis stage are mainly meant for us, and others in our team, so don’t need to be in a format that an outside audience can understand.

Explanatory analysis entails the steps taken to answer our focus question(s) and share our results with an outside audience. This will often mean producing outputs that are suitable for an outside audience, such as by using high-quality plots, well-formatted statistical tables and/or interactive dashboards.

File Systems and Paths

A computer’s file system functions like a filing cabinet. Just as you might organize papers, documents and other files into a folder in your filing cabinet files on your computer are organized in a series of folders (or directories) on your hard drive.

Although their format will vary slightly depending on your operating system, their essential structure remains the same. For instance, if I have a file called “Analysis.R” in my “Project” folder its file path might be C:/Project/Analysis.R. This provides an address for the file and lets the computer know it’s located on the C drive in a folder called “Project”. We can also specify the location of a folder by excluding the name of a file. For example the file path C:/Stuff/Project 1/ specifies that the ‘Project 1’ folder is stored in another folder called ‘Stuff’ (or sub-directory).

Note: Windows typically shows file paths with backslashes (\), while R uses forward slashes (/). Don’t worry, they mean the same thing. Just make sure you use forward slashes when referring to the location of files and folders in R.

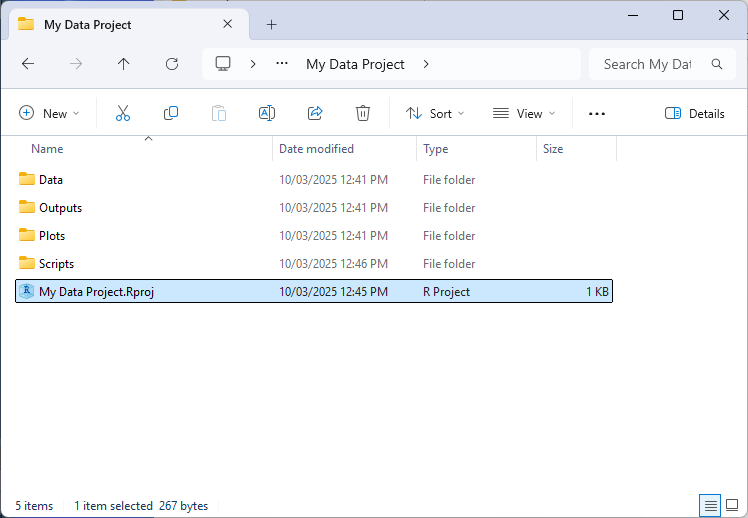

RStudio Projects

The folder R works from is called the working directory and usually needs to be correctly specified for your code to run. Although it’s a good idea to be familiar with how working directories work (more on that below), it’s generally easier to use RStudio Projects as this automatically sets the location of your working directory when you open it to wherever the RProject file is saved.

To create a new project select ‘File’ > ‘New Project’ via the RStudio file menu. A .Rproj file will then be saved in the directory you specify. Now, each time you open the project your working directory will automatically be set to the location of the .Rproj file.

Aside from being more convenient to have the working directory automatically set in this way, R projects allow us to specify relative file and directory paths when working with R (using ‘.’ in the file path). This feature is particularly handy when when collaborating with others on the same project or when working on the project across multiple machines (such as a home computer). As instead of reference absolute paths like “C:/My Files/Project 1/Data/my_data.csv”, you can simply make references relative to where the .Rproj file is stored e.g. “./Data/my_data.csv”. This makes code easier to read and also means it will work without needing to modify file paths or manually set working directories based on where the project folder resides on each system.

Working Directories

The working directory tells R where to open and save files on your hard drive. When starting R from a project its location will be set to the project folder. Otherwise, your working directory will be set to the default location (such as the ‘My Documents’ folder for Windows).

To return the current location of your working directory, use the command getwd(). To set the location of your working directory in R, use setwd("file_path"), where ‘file_path’ denotes the location of the folder you’re working from. You can also change the default location of your working directory using RStudio via: ‘Session’ > ‘Set Working Directory’.

Files can be loaded and saved files relative to the working directory. For instance, if we’ve set our working directory to ‘C:/My Data Project/’, we could import the data file using dta_data<-read.csv('./data.csv'), with R substituting ‘.’ with the location of the working directory.

Organizing Your Working Directory

Although you’re free to organize your analysis projects in a way that works for you, a good place to start is to organize your a working directory around a standardized structure, such as:

- ./Data/: For storing the original input data and processed versions of the data.

- ./Outputs/: Where results of analysis are stored, such as statistical summaries.

- ./Plots/: Which is used for saving any plots generated.

- ./Scripts/: For storing individual R scripts.

Applying a standardized structure like this will help keep your analysis project organized and make it easier to understand how everything fits together (both for your colleagues and you when you return to your analysis in the future).

Reading and Saving Data

Importing and saving files requires picking the right function for the job and specifying where to load or save the file. The code below provides a demonstration of this using the titanic data, with . letting R know the files should be loaded and saved within the working directory:

#load the titanic bio data

dta_titanic_passengers_bio<-read.csv("./Data/titanic bio data.csv")

#save the titanic data as an RDS file

saveRDS(dta_titanic_passengers_bio, "./Data/titanic bio data.rds")Also notice that we’ve provided saveRDS() with dta_titanic_passengers_bio so it knows what we’re asking it to save. saveRDS() has guessed dta_titanic_passengers_bio is the file we’d like it to save, but we can also specify this explicitly using saveRDS(object=dta_titanic_passengers_bio, "./Data/titanic bio data.rds")

Customizing Parameters

The behaviour of functions can often be customized by tweaking their parameters.

The options available for a function are listed in their documentation, which can be viewed by placing ? in front of the function in the console e.g. ?read_excel(). Take a look at Usage and Arguments in the help to get a sense of the parameters available for a function. Values specified after = represent the default value used when their value isn’t directly specified. As an example, skip = 0 indicates that read_excel() won’t skip any rows in an excel sheet unless we ask it to do so.

The code below presents a simple example of customizing parameters for read_excel(). By specifying sheet=1 read_excel() knows to read the data from the first sheet. Whereas na=c("","NA) tells read_excel() to consider cells that are empty or contain the value “NA” as missing:

#load the readxl package

library(readxl)

#view help for read_excel()

?read_excel()

#import data while customizing parameters

dta_titanic_ticket_data<-read_excel("./Data/titanic ticket data.xlsx",

sheet=1,

na=c("","NA"))Working With Common Data Formats

One of R’s many strengths is its ability to work with data stored in a variety of formats used by commercial software, such as Excel, SPSS, SAS and Stata files. The table below provides a summary of functions and packages that can be used to work with common data formats.

| Data Format | File Extension | Importing | Exporting | Required Package |

|---|---|---|---|---|

| CSV | .csv |

read.csv() or read_csv() |

write.csv() or write_csv() |

Base R or readr |

| Tab-delimited | .txt, .tsv | read_tsv() or read.delim() |

write_tsv() or write.table() |

readr or Base R |

| Excel | .xlsx, .xls |

read_excel() |

write_xlsx() |

readxl (import), writexl or openxlsx (export) |

| Stata | .dta |

read_dta() or read_stata |

write_dta() |

haven |

| SPSS | .sav, .por |

read_sav() or read_spss() |

write_sav() |

haven or foreign |

| SAS | .sas7bdat, .xpt |

read_sas() |

write_xpt() |

haven |

| R Data | .RData, .rda, .rds |

readRDS() |

save() or saveRDS() |

Base R |

Note: The functions listed below that use . for spaces, like read.csv() are available in Base R.

Looking at Your Data

The first step once you’ve imported your data you’ll need to get a sense of what you’re working with. At the outset this involves answering three questions: what is the structure and size of the data; what information does it contain; and are there any issues that need to be addressed prior to analysis?

Answering these questions when you first import the data into R will help you understand what steps need to be taken to get it read for analysis.

What is the Structure and Size of Your Data?

The str() function is a good place to start to understand how data is organized. In the example below str() indicates that dta_titanic_passengers_bio is a dataframe made up of 1,309 observations across 11 variables. The function also gives a hint about what the structure and class of each variable. For instance, int indicates that the survived variable is an integermade up of 1s and 0s.

#|output: true

#load the titanic bio data

dta_titanic_passengers_bio<-read.csv("./Data/titanic bio data.csv")

#take a look at the structure of the dataframe

str(dta_titanic_passengers_bio)'data.frame': 1309 obs. of 11 variables:

$ survived : int 1 1 0 0 0 1 1 0 1 0 ...

$ name : chr "Allen, Miss. Elisabeth Walton" "Allison, Master. Hudson Trevor" "Allison, Miss. Helen Loraine" "Allison, Mr. Hudson Joshua Creighton" ...

$ sex : chr "female" "male" "female" "male" ...

$ age : num 29 0.917 2 30 25 ...

$ sibsp : int 0 1 1 1 1 0 1 0 2 0 ...

$ parch : int 0 2 2 2 2 0 0 0 0 0 ...

$ ticket : chr "24160" "113781" "113781" "113781" ...

$ embarked : chr "S" "S" "S" "S" ...

$ boat : chr "2" "11" NA NA ...

$ body : int NA NA NA 135 NA NA NA NA NA 22 ...

$ home.dest: chr "St Louis, MO" "Montreal, PQ / Chesterville, ON" "Montreal, PQ / Chesterville, ON" "Montreal, PQ / Chesterville, ON" ...What Information Does Your Data Contain?

Before importing a dataset, understand how it was collected and how each variable is defined. This information is usually provided in a data dictionary, which explains what each variable means and what values it contains. If no data dictionary exists, it’s a good idea to contact the person and/or organization that created the data before analyzing it.

Although str() provides a good hint what information is available, it’s not designed to provide us a holistic picture of the structure and distribution of each variable. The skimr package’s skim() function and table() can provide a more holistic picture of your data.

The code below demonstrates this for the titanic data. Notice that the skim() function provides a set of information relevant to the class of each variable. For instance, we can see that the sex variable contains two unique values, which is what we might expect if passengers had been assigned as Male or Female. It also appears there is a lot of missing values for the boat variable, with missing values recorded for 823 of the 1,309 observations. The number of missing values has also been provided fro numeric variables alongside summary statistics that give us a sense of the range and distribution of values recorded.

#|output: true

#load the skimr package

library(skimr)

#Have R provide summary information for each variable

skim(dta_titanic_passengers_bio)| Name | dta_titanic_passengers_bi… |

| Number of rows | 1309 |

| Number of columns | 11 |

| _______________________ | |

| Column type frequency: | |

| character | 6 |

| numeric | 5 |

| ________________________ | |

| Group variables | None |

Variable type: character

| skim_variable | n_missing | complete_rate | min | max | empty | n_unique | whitespace |

|---|---|---|---|---|---|---|---|

| name | 0 | 1.00 | 12 | 82 | 0 | 1307 | 0 |

| sex | 0 | 1.00 | 4 | 6 | 0 | 2 | 0 |

| ticket | 0 | 1.00 | 3 | 18 | 0 | 929 | 0 |

| embarked | 2 | 1.00 | 1 | 1 | 0 | 3 | 0 |

| boat | 823 | 0.37 | 1 | 7 | 0 | 27 | 0 |

| home.dest | 564 | 0.57 | 5 | 50 | 0 | 369 | 0 |

Variable type: numeric

| skim_variable | n_missing | complete_rate | mean | sd | p0 | p25 | p50 | p75 | p100 | hist |

|---|---|---|---|---|---|---|---|---|---|---|

| survived | 0 | 1.00 | 0.38 | 0.49 | 0.00 | 0 | 0 | 1 | 1 | ▇▁▁▁▅ |

| age | 263 | 0.80 | 29.88 | 14.41 | 0.17 | 21 | 28 | 39 | 80 | ▂▇▅▂▁ |

| sibsp | 0 | 1.00 | 0.50 | 1.04 | 0.00 | 0 | 0 | 1 | 8 | ▇▁▁▁▁ |

| parch | 0 | 1.00 | 0.39 | 0.87 | 0.00 | 0 | 0 | 0 | 9 | ▇▁▁▁▁ |

| body | 1188 | 0.09 | 160.81 | 97.70 | 1.00 | 72 | 155 | 256 | 328 | ▇▇▇▅▇ |

The table() function can be helpful for getting a sense of how values are distributed for categorical data. In the case of the sex variable, skim() indicated that it contained two unique values, but not what these values were. table() can be used to produce frequency tables to explore this:

Note: $ is used for accessing a particular variable within the dataframe by name.

#|output: true

#Count the number of observations for each unique value of the sex variable

table(dta_titanic_passengers_bio$sex)

female male

466 843 #Count the number of observations for each unique value of the survived variable

table(dta_titanic_passengers_bio$survived)

0 1

809 500 #Count the sex of passengers vs whether they survived

table(dta_titanic_passengers_bio$sex,

dta_titanic_passengers_bio$survived)

0 1

female 127 339

male 682 161Are There Any Issues That Need to be Addressed Prior to Analysis?

The reality of working with real world data is that data cleaning and wrangling is an iterative process that occurs throughout your analysis as you discover new issues and refine your questions. However, based on our brief exploration of the data I can already see some issues that might need to be addressed:

The survived variable uses 1 or 0 to indicate whether a passenger survived. Converting these to logical values (

TRUEandFALSE) would make this variable easier to interpret.The sex of passengers could be capitalized for better presentation in tables and plots.

Editing Data

Data is edited in R by running commands that change the values stored in an object. The basic pattern is simple: old_data<- new_data

Replace Values By Location

The code below shows how to replace one entry based on where it sits in your dataset. Here, dta_titanic_passengers_bio$sex[3] tells R to find row 3 in the sex column. The <- "Female" part tells R what to put there instead.

#load the titanic bio data

dta_titanic_passengers_bio<-read.csv("./Data/titanic bio data.csv")

#replace the third value of the sex variable with "Female" (instead of "female")

dta_titanic_passengers_bio$sex[3]<-"Female"Replace Values By Logical Test

More often, you’ll want to change many values at once across an entire column. The code below capitalizes all entries in the sex column using the toTitleCase() function:

library(tools)

dta_titanic_passengers_bio$sex<-toTitleCase(dta_titanic_passengers_bio$sex)Having to edit data in this way has an important advantage: it encourages reproducibility, so each time you (or someone else) runs your code they can get identical results. Creating reproducible analysis is an important pre-requisite for good policy analysis. But, it also protects you: as you’ll have a permanent record in your code of any adustments you made to a dataset and why it was necessary.

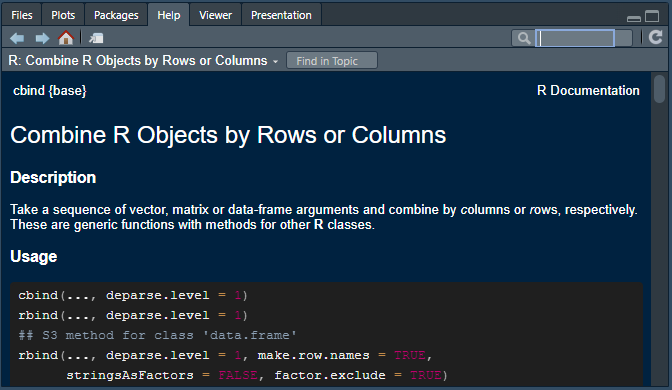

Getting Help

You can learn more about how a function works by adding ? to the front of the function name and executing the command in R. For example, to see the in-built documentation for the rbind() function you can execute ?rbind in the R console. When asking for documentation on operators make sure to enclose it in apostrophes or quotes e.g. ?"+", ?'%in%', ?'|>' etc. You can also search using the search function on the ‘Help’ tab of the explorer pane.

Each help file generally has the same basic format, with the purpose, logic and options available for a function being organized across the following sections:

Description: Provides a brief summary of what a function does.

Usage: Shows you how a function is structured and the defaults for arguments (specified next to ‘=’).

Arguments: Lists all the parameters and arguments the function accepts and explains what each one does. For rbind ‘…’ just means the function can take multiple dataframes, matrices or vectors.

Details: Provides a deeper explanation of how the function works.

Value: Explains what the function returns (outputs). Essential for understanding what you’ll get back when you use the function.

Examples: Shows practical examples of how to use the function with example code. Examples will typically also include any dataframes relevant for reproducing the provided examples.

See Also: Lists related functions.

References: Points to technical literature or sources relevant to the function.

My recommendation is to read R’s help like you might a recipe: focus on the most important sections first and dig into the details as needed. In practice, my suggestion is to start with the Description, Usage and Arguments to get a feel for what the function does, how it’s structured and the options available.

From there, my advice is to go straight to Examples to see how it works. From here you can also copy the code and adjust it to suit your needs.

Vignettes

Some packages also come with their own ‘vignettes’, which provide a general explanation of what the package and its functions do. To view a vignette for a package you can use vignette(‘package_name’). You can also see a list of vignettes available by executing vignette() without mentioning a package.

If you’d like to see how base R and tidyverse approaches compare, you can view a helpful comparison by typing vignette('base') in R.

Handling Errors

Whether you’re a novice or experienced programmer, knowing how to track down, correct and avoid making errors is an important skill: both when designing a methodology and when creating the analysis recipe that executes your plan.

Code errors: in some cases a piece of code might not work, resulting in an error message, such as :

When we’ve forgotten to include a closing bracket at the end of a function.

If we’ve forgot to include ” at the start and end of a string we’re defining.

When we’ve used the wrong capitalization when referencing an object, function or package.

Approach errors: sometimes our code might run perfectly, but it still includes an error.

Maybe we’re interested in calculating the average age of people in our data, but have told R to calculate the median.

Perhaps we want to produce a graph showing the relationship between a person’s height and weight, but have accidentally selected the shoe-size variable.

Data errors: even if our code runs perfectly and our analysis recipe is correct, bad data can mean our results are wrong. This is commonly referred to as the ‘garbage in, garbage out’ (GIGO) problem. For instance:

Maybe missing values are recorded as ‘-9’ in our source data, rather than ‘NA’.

Perhaps the data includes redundant values, such as reporting total population at the national and state level, resulting in us counting people twice in our analysis.

Perhaps there are values that make little sense: such as somebody with a recorded age of 241.

Note: although fatal errors that produce an error message may be discouraging, explicit failures have the advantage of being easier to spot, rectify, and learn from, because R immediately brings the issue to our attention. On the other hand, methodological and data quality errors can be much more dangerous as R will happily (and silently) produce incorrect results if your approach is flawed or your data contains undetected problems.

For this reason, developing good data cleaning and verification practices is essential. Including by checking and visually examining your results at each stages of analysis, implementing sanity checks and seeking peers to review and validate your work.

Strategies for avoiding and troubleshooting errors

Start from the begining: Since R executes code sequentially from top to bottom, adopt a methodical debugging process by trying to run the first ten percent of your code and only moving to the next section once it works (and so on).

Setting Your Working Directory: R needs to know where to look when loading and saving files. You can do this manually be executing the setwd() function, but it recommended you crearte a new R Projects in the directory you’re working from.

Control flow and logic: Remember that to reference an object it needs to exist in R. For instance, before we can produce a plot from a dataset we need to import it into R and assign it an object name using ‘<-’ first.

Spelling and case sensitivity: packages, functions and objects are case sensitive in R, which means it considers max() and MAX() to be completely different functions.

Object Assignment: Use‘<-‘ when assigning values to an object. A good way to think of this is ’<-‘ sends values from the right to whatever object the arrow is pointing to.

Finishing what you started: When starting a function, make sure to end it with ’)“. Similarly, when including a text string in code, make sure to finish and end it with” or ’.

Pause between arguments: remember to separate each argument withing a function with a comma so R knows they’re seperate e.g. mean(dta_vector, rm.na=TRUE).

Referencing variables: Some functions are fussy with how you reference variables and will return an error if you do it in a way they don’t like. For instance, even though dta_example$var_1 and dta_example[, “var_1”] reference the same column, certain functions will only accept one format or the other.

Try to apply logic checks to your analysis: a good approach for identifying potential issues with your analysis is to identify conditions that should be met if the data, code and analysis is correct, for instance:

If we’re estimating the cost of building more schools, the cost should probably be somewhere between zero and the budget available for education (and likely close to what has been spent for similar programs in the past)

If we are calculating a state or region’s population, when we add them together they should be close to the national population

If we are interested in examining how outcomes differ across different sexes we might want to make sure a person’s sex has been consistently recorded across the dataset e.g. not “Male”, “M” and “Man”.

Data Cleaning and Wrangling

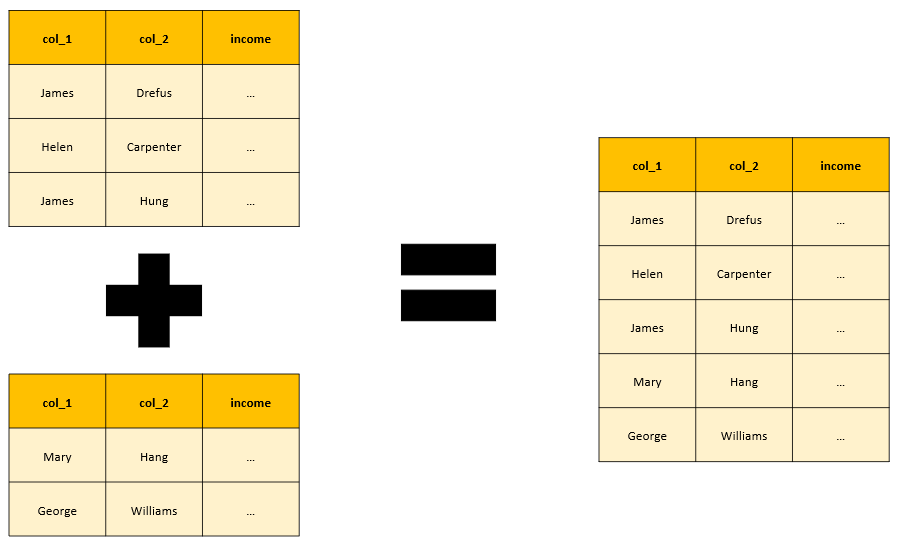

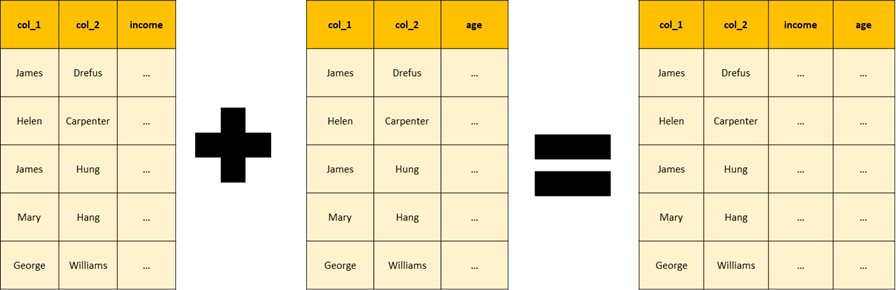

Data cleaning and wrangling is about transforming a raw dataset into a format and structure that’s suitable for analysis, modeling and visualization. Although both terms are used interchangeably and describe similar steps, for the sake of clarity: